"What is intelligence?" It is arguably the oldest question in philosophy and, as of 2026, the most consequential question in technology. For millennia, thinkers from Aristotle to Descartes debated whether intelligence was a divine spark, a mechanical process, or something else entirely. Today, the debate has shifted from the lecture hall to the data center. We have built machines that write poetry, prove theorems, and beat world champions at games once thought to require "genius."

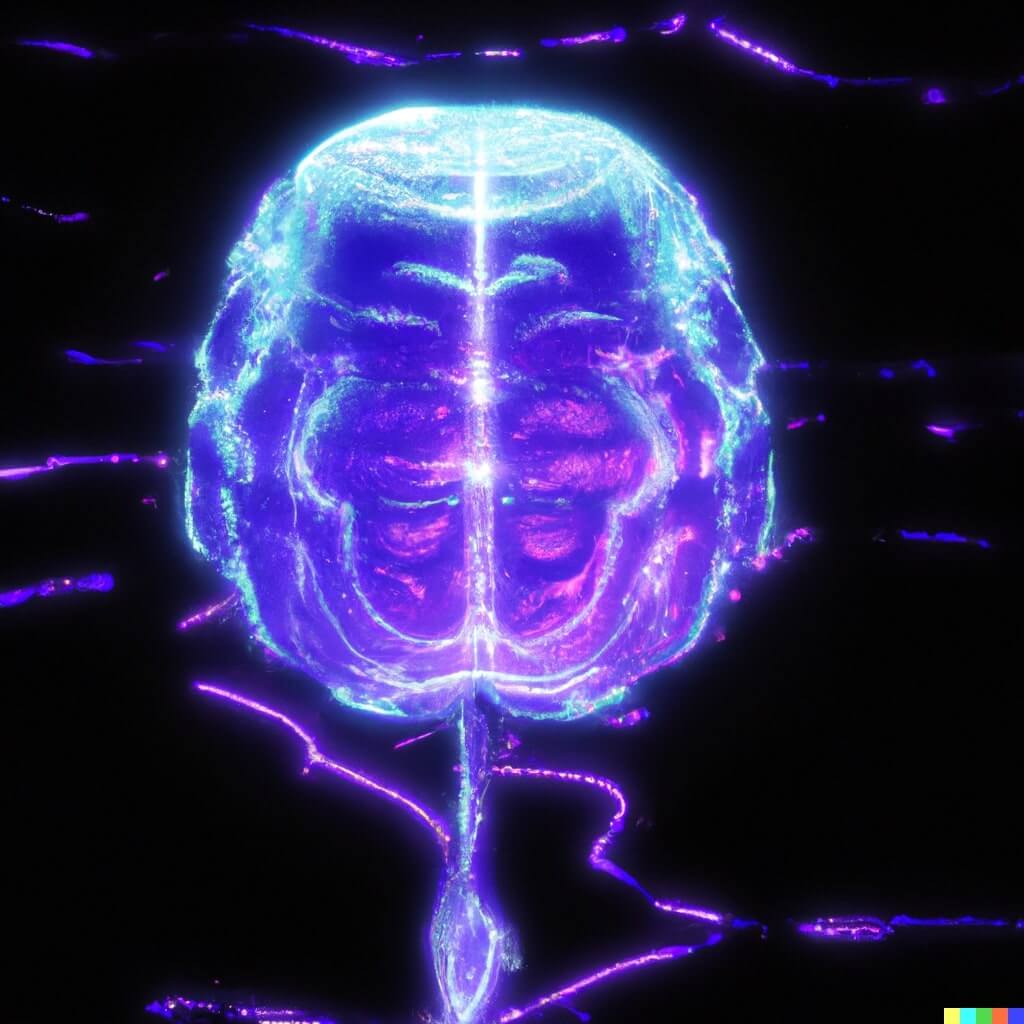

As Blaise Aguera y Arcas — VP at Google Research and author of What Is Intelligence? (MIT Press, 2025) — puts it: "Life and intelligence are the same thing. They are both computational." That statement bridges 3.8 billion years of biological evolution with 80 years of computer science. From the 86 billion neurons in your brain to the 2 trillion parameters in frontier large language models, intelligence is the thread connecting it all.

This guide traces the complete arc of intelligence — from the molecules inside your cells to the AI agents reshaping how we work. Whether you are a developer, a founder, a researcher, or simply curious about the nature of mind, this is the map.

TL;DR: Intelligence is the capacity to learn, reason, and act in pursuit of goals. Biological brains do it with 86 billion neurons; AI does it with neural networks and trillions of parameters. In 2026, frontier models pass the Turing test, AI agents autonomously execute multi-hour tasks, and the line between "real" and "artificial" intelligence is blurring fast.

🧠 What Is Intelligence?

Intelligence is the capacity to acquire knowledge, reason about it, and apply it to achieve goals across diverse and changing environments. This definition spans biology, psychology, computer science, and philosophy — and it applies equally to a human solving a crossword puzzle and an AI agent debugging a codebase.

Three pillars underpin every form of intelligence we have observed:

- Learning — acquiring information from experience, instruction, or observation

- Reasoning — drawing inferences, spotting patterns, and making decisions under uncertainty

- Acting — translating knowledge into goal-directed behavior in the real world

What separates intelligence from mere computation is generalization. A calculator can multiply numbers, but it cannot learn to play chess. A chess engine can play chess, but it cannot write a sonnet. A truly intelligent system — biological or artificial — transfers knowledge across domains.

Properties of Intelligence Across Substrates:

| Property | Biological (Human Brain) | Artificial (LLMs / Agents) | Artificial Life (Cellular Automata) |
|---|---|---|---|
| Learning | Synaptic plasticity, experience | Gradient descent, fine-tuning | Evolutionary selection |
| Memory | Hippocampus, long-term potentiation | Context windows, vector stores | Inherited structure |
| Reasoning | Prefrontal cortex, working memory | Chain-of-thought, tree search | Emergent rule following |
| Adaptation | Neuroplasticity, immune system | Transfer learning, RLHF | Mutation, self-replication |
| Communication | Language, gesture, writing | Text generation, tool calls | Chemical signals |
| Substrate | Carbon-based neurons | Silicon-based transistors | Digital or biochemical |
| Energy | ~20 watts | ~1 megawatt (training) | Negligible |

The most striking insight from this table: intelligence is substrate-independent. It can emerge in carbon, silicon, or even abstract mathematical structures. What matters is the pattern, not the material.

🔬 Biological Intelligence: How Brains Think

The human brain contains roughly 86 billion neurons connected by an estimated 100 trillion synapses — making it the most complex known structure in the universe. Every thought you have, every memory you recall, every sentence you read right now is the product of electrochemical signals racing through this network at up to 120 meters per second.

But how does raw biology produce something as intangible as "thought"?

The Mathematical Neuron (1943)

The story of artificial intelligence begins, ironically, with biology. In 1943, neurophysiologist Warren McCulloch and logician Walter Pitts published a landmark paper proposing that neurons could be modeled as simple logical gates. A neuron either fires or it does not — a binary switch. Chain enough binary switches together, and you can compute anything a Turing machine can.

This was a radical idea: the brain is a computer.
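The "neurons as logic gates" idea can be sketched in a few lines. This is a simplified threshold unit in the spirit of McCulloch and Pitts, not a reproduction of their 1943 formalism: the function names, the use of a negative weight for inhibition, and the specific thresholds are illustrative assumptions.

```python
def mp_neuron(inputs, weights, threshold):
    """Fire (return 1) when the weighted sum of binary inputs reaches the threshold."""
    total = sum(x * w for x, w in zip(inputs, weights))
    return 1 if total >= threshold else 0


def AND(a, b):
    # Both inputs must be active to reach a threshold of 2.
    return mp_neuron([a, b], [1, 1], threshold=2)


def OR(a, b):
    # A single active input is enough to reach a threshold of 1.
    return mp_neuron([a, b], [1, 1], threshold=1)


def NOT(a):
    # Assumption: inhibition is modeled as a negative weight with threshold 0.
    return mp_neuron([a], [-1], threshold=0)


def XOR(a, b):
    # No single threshold unit computes XOR, but a small circuit of units does.
    return AND(OR(a, b), NOT(AND(a, b)))


truth_table = [(a, b, XOR(a, b)) for a in (0, 1) for b in (0, 1)]
print(truth_table)  # [(0, 0, 0), (0, 1, 1), (1, 0, 1), (1, 1, 0)]
```

Chaining units this way is what makes the "enough binary switches" argument work: each gate is trivial, and the expressive power comes from composition.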

Hebb's Rule (1949)

Six years later, psychologist Donald Hebb proposed the learning rule that still underpins modern AI, usually summarized as "neurons that fire together wire together." When one neuron repeatedly helps trigger another, the connection between them strengthens. This is how memories form, habits crystallize, and skills develop.

Hebb's rule is the biological ancestor of the weight-adjustment algorithms in deep learning. When an AI model trains on data, it strengthens connections that contribute to correct outputs and weakens those that do not. The math is different (backpropagation follows an error signal, while Hebb's rule uses only local co-activity), but the core principle is shared: learning means changing connection strengths.
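As a rough illustration, the simplest textbook form of Hebb's rule increases a weight in proportion to the joint activity of the two neurons it connects. The learning rate and the activity sequence below are made-up values chosen only to show the mechanics.

```python
def hebbian_update(weight, pre, post, learning_rate=0.1):
    """Basic Hebbian rule: the weight grows in proportion to joint activity."""
    return weight + learning_rate * pre * post


w = 0.0
# (pre, post) activity pairs: the two neurons are co-active on 3 of 5 steps.
activity = [(1, 1), (1, 0), (1, 1), (0, 1), (1, 1)]
for pre, post in activity:
    w = hebbian_update(w, pre, post)

print(round(w, 2))  # 0.3
```

Only the three co-active steps change the weight. Note that this plain form can only strengthen connections; practical variants add decay or normalization so weights do not grow without bound.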

The Hierarchy of Biological Computation

Intelligence in the brain emerges through layers of increasing complexity:

THE BIOLOGICAL COMPUTATION STACK

Molecules → DNA, proteins, neurotransmitters

Cells → Neurons (86 billion), glial cells

Circuits → Neural networks (visual cortex, motor cortex)

Regions → Prefrontal cortex (planning), hippocampus (memory)

Systems → Perception, language, emotion, motor control

Consciousness → Subjective experience (??)

At the molecular level, your body runs quintillions of computations simultaneously. Every ribosome in every cell reads messenger RNA and assembles proteins — a fundamentally computational process. John von Neumann recognized this in the 1940s: cells are computers, and DNA is the program. Or as Aguera y Arcas frames it: "You cannot be a living organism without literally being a computer."

This is not a metaphor. Ribosomes are molecular Turing machines. They read an input tape (mRNA), follow a set of rules (the genetic code), and produce an output (proteins). The entire apparatus of life — from cell division to immune response to neural firing — is computation at different scales.

The brain is just the most spectacular example: a computer made of computers (cells) made of computers (molecules), running in massively parallel fashion, consuming only about 20 watts of power. No GPU cluster comes close to that efficiency.

Cognitive Maps: How Brains Navigate Knowledge

One of the most profound discoveries in neuroscience is that the brain represents knowledge the same way it represents physical space — as a navigable geometric map.

In the 1930s and 1940s, psychologist Edward Tolman showed that rats build internal "cognitive maps" (a term he introduced in 1948) rather than learning simple stimulus-response chains. Decades later, neuroscientists discovered the actual neurons that implement these maps. Place cells in the hippocampus fire when an animal occupies a specific location, each cell encoding "I am here." Grid cells in the entorhinal cortex fire in repeating hexagonal patterns across the entire environment, creating a coordinate system that acts as the brain's internal GPS. Together with head direction cells, boundary cells, and object-vector cells, they form a layered spatial intelligence system.

THE BRAIN'S NAVIGATION STACK

┌──────────────────────────────────────────────────────┐

│ Object-Vector Cells "Cheese is 2m at 45°" │

├──────────────────────────────────────────────────────┤

│ Grid Cells Hexagonal coordinate grid │

├──────────────────────────────────────────────────────┤

│ Place Cells "I am at location X" │

├──────────────────────────────────────────────────────┤

│ Head Direction Cells "I am facing north" │

├──────────────────────────────────────────────────────┤

│ Boundary Cells "Wall is 1m to my left" │

└──────────────────────────────────────────────────────┘

│

▼

Same cells reactivate for ABSTRACT navigation:

comparing prices, evaluating social status,

reasoning about concept similarity

The revelation came when neuroscientists found that the hippocampus and entorhinal cortex use the same cells for abstract reasoning as for physical navigation. When humans navigate conceptual spaces — comparing product features, evaluating the similarity of ideas, or reasoning about social relationships — grid cells and place cells fire in the same patterns they use for walking through a room. The brain evolved spatial navigation first, then repurposed that machinery for thought itself.

This has a direct parallel in artificial intelligence. When word-embedding models such as word2vec, and the large language models that build on the same idea, represent "king minus man plus woman equals queen" as vector arithmetic in embedding space, they are performing a similar kind of abstract spatial navigation to the one hippocampal cells enable in biological brains. The brain uses factorized representations, separating structural information (where something is) from sensory information (what something looks like), and there is growing evidence that transformers learn similar factorizations in their internal layers.
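The "king minus man plus woman" arithmetic can be shown with a toy example. The three-dimensional vectors below are hand-made for illustration (roughly: royalty, maleness, femaleness); real embeddings are learned from data and have hundreds or thousands of dimensions.

```python
import math

# Hand-made toy vectors, NOT learned embeddings.
vectors = {
    "king":  [0.9, 0.9, 0.1],
    "queen": [0.9, 0.1, 0.9],
    "man":   [0.1, 0.9, 0.1],
    "woman": [0.1, 0.1, 0.9],
    "apple": [0.0, 0.2, 0.2],
}


def cosine(u, v):
    """Cosine similarity: 1.0 means the vectors point in the same direction."""
    dot = sum(a * b for a, b in zip(u, v))
    norm_u = math.sqrt(sum(a * a for a in u))
    norm_v = math.sqrt(sum(b * b for b in v))
    return dot / (norm_u * norm_v)


def analogy(a, b, c):
    """Return the word closest to vec(a) - vec(b) + vec(c), excluding the inputs."""
    target = [x - y + z for x, y, z in zip(vectors[a], vectors[b], vectors[c])]
    candidates = [word for word in vectors if word not in (a, b, c)]
    return max(candidates, key=lambda word: cosine(target, vectors[word]))


result = analogy("king", "man", "woman")
print(result)  # queen
```

The point of the sketch is the geometry: relationships between concepts become directions in space, so an analogy reduces to moving along a direction and looking up the nearest neighbor.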

The convergence suggests something deep: geometric representation of knowledge may be a universal property of intelligence, regardless of substrate. Brains evolved it. Neural networks discovered it through gradient descent. The mathematics of navigation — coordinate systems, distance metrics, path integration — may be the mathematics of thought itself.

🤖 Artificial Intelligence: How Machines Learn

The leap from biological neurons to artificial ones took 15 years. In 1958, psychologist Frank Rosenblatt built the Perceptron — a physical machine (not just software) that could learn to classify images by adjusting the weights of its connections. The New York Times headline read: "New Navy Device Learns by Doing."

The core idea was elegant and has not changed since: take inputs, multiply them by weights, sum the results, and fire if the sum exceeds a threshold. Adjust the weights based on errors. Repeat.
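The loop described above can be sketched as a minimal perceptron that learns the logical AND function. The learning rate, epoch count, and zero initialization are illustrative choices, not details of Rosenblatt's original machine.

```python
def predict(weights, bias, inputs):
    """Weighted sum plus bias; fire (1) if the total exceeds zero."""
    total = sum(w * x for w, x in zip(weights, inputs)) + bias
    return 1 if total > 0 else 0


def train_perceptron(samples, learning_rate=0.1, epochs=20):
    """Perceptron rule: nudge weights in the direction that reduces each error."""
    weights, bias = [0.0, 0.0], 0.0
    for _ in range(epochs):
        for inputs, target in samples:
            error = target - predict(weights, bias, inputs)
            weights = [w + learning_rate * error * x
                       for w, x in zip(weights, inputs)]
            bias += learning_rate * error
    return weights, bias


# Training data for logical AND: output is 1 only when both inputs are 1.
and_gate = [((0, 0), 0), ((0, 1), 0), ((1, 0), 0), ((1, 1), 1)]
weights, bias = train_perceptron(and_gate)
predictions = [predict(weights, bias, inputs) for inputs, _ in and_gate]
print(predictions)  # [0, 0, 0, 1]
```

AND is linearly separable, so the perceptron rule is guaranteed to converge on it. The same single unit cannot learn XOR, a limitation that multi-layer networks trained with backpropagation later removed.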

From Perceptrons to Transformers — A Timeline:

| Year | Milestone | Intelligence Type | What It Proved |
|---|---|---|---|
| 1943 | McCulloch-Pitts neuron | Mathematical model | Neurons are logical gates |
| 1949 | Hebb's learning rule | Learning theory | Connection strength = memory |
| 1958 | Rosenblatt's Perceptron | Machine learning | Machines can learn from data |
| 1982 | Hopfield network | Associative memory | Physics of magnets → memory machines |
| 1986 | Backpropagation | Deep learning | Multi-layer networks can train |
| 1997 | Deep Blue beats Kasparov | Programmed intelligence | Brute force + expert rules = chess mastery |
| 2012 | AlexNet wins ImageNet | Learned intelligence | Deep learning beats hand-crafted features |
| 2016 | AlphaGo beats Lee Sedol | Emergent intelligence | Self-play discovers superhuman strategies |
| 2017 | Transformer architecture | Architecture breakthrough | Attention is all you need |
| 2020 | GPT-3 (175B params) | Emergent language | Scale produces capabilities |
| 2022 | ChatGPT launch | Public AI adoption | LLMs reach mainstream |
| 2023 | GPT-4, Claude 2 | Multimodal reasoning | Vision + language + code |
| 2024 | Hopfield & Hinton (Nobel Prize in Physics) | Physics-AI bridge | Neural networks rooted in statistical mechanics |
| 2024 | AlphaFold (Nobel Prize in Chemistry) | Scientific intelligence | AI solves protein folding |
| 2025 | Frontier models (OpenAI, Anthropic, Google) | Frontier reasoning | Multi-hour autonomous tasks |
| 2026 | AI agents in production | Autonomous intelligence | Agents plan, execute, and iterate |

Four milestones on this timeline deserve special attention because they represent fundamentally different kinds of artificial intelligence:

Deep Blue (1997): Programmed Intelligence

IBM's Deep Blue defeated world chess champion Garry Kasparov by evaluating 200 million positions per second using hand-coded chess knowledge. It was brilliant at chess and useless at everything else. Deep Blue did not "learn" — it followed rules written by human experts. This is programmed intelligence: powerful but brittle.

AlexNet (2012): The Scale Tipping Point

Built by Alex Krizhevsky, Ilya Sutskever (later co-founder of OpenAI), and Geoffrey Hinton, AlexNet won the ImageNet image recognition challenge by a massive margin — not because it used new ideas, but because it scaled 1980s ideas with 10,000x more compute via Nvidia GPUs. What it revealed was even more important: AlexNet's first layers learned to detect edges and color blobs (just like biological visual cortex), deeper layers assembled those into textures and faces, and the second-to-last layer produced 4,096-dimensional embeddings where similar concepts clustered together — elephants near elephants, guitars near guitars. This is the same embedding principle that powers every modern LLM. AlexNet was the moment AI stopped being hand-crafted and started being grown. See How Do LLMs Work? and What Is Mechanistic Interpretability? for the full story.

AlphaGo (2016): Learned Intelligence

DeepMind's AlphaGo defeated 18-time world Go champion Lee Sedol — a feat most AI researchers thought was a decade away. The pivotal moment was Move 37 in Game 2: a placement so unusual that commentators thought it was a mistake. It turned out to be a masterstroke that no human had considered in 3,000 years of Go play.

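AlphaGo was not programmed with hand-written Go strategies. It first learned from records of human expert games, then improved through millions of games of self-play, discovering patterns that went beyond human play. (Its successor, AlphaGo Zero, learned from self-play alone.) This is learned intelligence: surprising and creative.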

LLMs (2022+): Emergent Intelligence

Large language models like GPT-4 and Claude are pretrained on one core task: predicting the next token in a sequence. Later stages such as instruction tuning and RLHF shape how they respond, but nobody programmed them to write code, solve math problems, or engage in philosophical debate. (See How Do LLMs Work? for a technical deep dive.) These capabilities emerged from the training process, a phenomenon researchers still do not fully understand.

This is emergent intelligence: the most powerful and the most mysterious. And it is the kind that is reshaping the world right now.

The Intelligence Spectrum (2026)

Programmed Learned Emergent

◄──────────────────┼────────────────┼──────────────────►

Deep Blue AlphaGo Frontier LLMs

Chess rules Self-play Next-token prediction

coded by humans discovered → reasoning, coding,

Move 37 math, conversation

Narrow ◄──────────────────────────────────────► General

The arrow from left to right is not just a timeline. It is a trajectory toward generality. Deep Blue could only play chess. AlphaGo could only play Go. But today's frontier LLMs can write code, analyze images, draft legal contracts, debug Python, compose music, and explain quantum mechanics — all without being explicitly programmed for any of those tasks.

The Hidden Chapter: When Physics Became Intelligence

There is a milestone missing from most AI timelines — one that connects the story not to computer science, but to physics. In 1982, physicist John Hopfield realized that the Ising model of magnetism — a grid of atoms whose spins align to minimize energy — could be repurposed as a memory machine.

In the Hopfield network, artificial neurons replace atoms. Weighted connections replace magnetic coupling. And the system's tendency to minimize energy becomes a tendency to converge on stored patterns. Feed the network a noisy, incomplete version of a memory, and it relaxes — like a marble rolling downhill — into the nearest stored pattern. The network "remembers" by finding the lowest-energy configuration.

HOPFIELD NETWORK: MEMORY AS ENERGY MINIMIZATION

Energy

│

│ ╱╲ ╱╲ ╱╲

│ ╱ ╲ ╱ ╲ ╱ ╲

│ ╱ ╲ ╱ ╲ ╱ ╲

│ ╱ ╲ ╱ ╲ ╱ ╲

│ ╱ Memory╲ ╱ Memory ╲╱ Memory ╲

│ ╱ "A" ╳ "B" ╳ "C" ╲

│ ╱ ╲ ╱ ╲

│ ▼ ▼ ▼ ▼

└──────────────────────────────────────────

Configuration Space

Feed in noisy "A" → system rolls into Memory A valley

The network "remembers" by minimizing energy
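The recall process described above can be sketched with a tiny Hopfield network. This is a minimal illustration under stated assumptions: two hand-picked 8-unit patterns, Hebbian outer-product weights, and asynchronous updates in a fixed order. Real experiments use larger networks and random update orders.

```python
def train_hopfield(patterns):
    """Hebbian outer-product rule with no self-connections."""
    n = len(patterns[0])
    weights = [[0.0] * n for _ in range(n)]
    for p in patterns:
        for i in range(n):
            for j in range(n):
                if i != j:
                    weights[i][j] += p[i] * p[j] / n
    return weights


def energy(weights, state):
    """Network energy; stored patterns sit at the bottom of energy valleys."""
    n = len(state)
    return -0.5 * sum(weights[i][j] * state[i] * state[j]
                      for i in range(n) for j in range(n))


def recall(weights, state, sweeps=5):
    """Update one unit at a time; each flip can only lower (or keep) the energy."""
    state = list(state)
    for _ in range(sweeps):
        for i in range(len(state)):
            field = sum(w * s for w, s in zip(weights[i], state))
            state[i] = 1 if field >= 0 else -1
    return state


memory_a = [1, 1, 1, 1, -1, -1, -1, -1]
memory_b = [1, -1, 1, -1, 1, -1, 1, -1]
weights = train_hopfield([memory_a, memory_b])

noisy_a = list(memory_a)
noisy_a[0] = -1  # corrupt one unit of memory A

recalled = recall(weights, noisy_a)
print(recalled == memory_a)  # True
print(energy(weights, noisy_a) > energy(weights, recalled))  # True
```

The corrupted input starts higher on the energy landscape and settles into the valley of the nearest stored pattern, which is the "marble rolling downhill" picture in code.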

Geoffrey Hinton extended this with the Boltzmann machine (1985) — named after the physicist who founded statistical mechanics — adding probabilistic learning borrowed from thermodynamics. Training a Boltzmann machine resembled a physical system cooling down: high temperature allows exploration (randomness), low temperature crystallizes into an ordered state (learned patterns).

On October 8, 2024, Hopfield and Hinton were named winners of the Nobel Prize in Physics for this work. Not the Turing Award. Not a computer science prize. Physics. Because the math governing a magnet's phase transition, a neural network's learning dynamics, and a grokking model's sudden generalization is the same math. Energy minimization. Gradient descent. The universe's tendency toward lower-energy states, whether in iron atoms or artificial neurons.

This recognition completed a circle that began in the 1920s: physics gave birth to the mathematics of neural networks, neural networks gave birth to modern AI, and modern AI is now being used to solve the hardest problems in science, from mapping dark matter in galaxies to predicting protein structures (half of the 2024 Nobel Prize in Chemistry went to Demis Hassabis and John Jumper for AlphaFold) to denoising gravitational wave signals. Intelligence, it turns out, is a bridge between disciplines, not a wall.

📏 Measuring Intelligence: From IQ to Benchmarks

If intelligence is the capacity to learn, reason, and act, how do we measure it? Humans have been trying for over a century with decidedly mixed results.

Human Intelligence Measurement

IQ tests, which descend from the scale Alfred Binet and Théodore Simon introduced in 1905, measure a narrow band of cognitive abilities: pattern recognition, verbal reasoning, working memory, processing speed. Howard Gardner's theory of multiple intelligences (1983) expanded the picture to include musical, bodily-kinesthetic, interpersonal, and other forms. Daniel Goleman popularized emotional intelligence in the 1990s. The consensus: human intelligence is multidimensional, and no single number captures it.

AI Benchmark Explosion

AI has its own measurement problem, and it is evolving faster than the benchmarks can keep up:

| Benchmark | What It Measures | GPT-4 (2023) | Frontier Models (2025) |
|---|---|---|---|
| MMLU | Academic knowledge (57 subjects) | 86.4% | 90%+ |
| HumanEval | Python code generation | 67.0% | 90%+ |
| MATH | Competition mathematics | 42.5% | 75%+ |
| ARC-AGI | Novel reasoning tasks | ~5% | 30-35% |
| Codeforces | Competitive programming | 11th percentile (GPT-4o) | Top 10 globally |
| SWE-bench | Real-world software engineering | 12.5% | 55-72% |

The rate of improvement is staggering. On Codeforces, GPT-4o scored in the 11th percentile. OpenAI's o3 model hit the 99.8th percentile. The latest frontier models rank in the top 10 globally — outperforming nearly all human competitive programmers on the planet.

METR Task Length: The Most Telling Metric

Perhaps the most revealing measure comes from METR (Model Evaluation and Threat Research), which tracks the length of real-world tasks AI can complete autonomously at 50% reliability. Their research reveals that this capability has been doubling every 7 months since 2019, with a recent acceleration to every 4 months:

- GPT-2 (2019): ~2-second tasks

- GPT-4 (2023): ~15-minute tasks

- Frontier models (2025): 2-4+ hour tasks

Projected forward at that pace, AI systems would handle full 8-hour workday tasks by late 2026 and week-long projects by 2028. A 7-month doubling time is roughly three times faster than the two-year doubling usually associated with Moore's Law.
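The arithmetic behind this kind of projection is simple exponential growth. The sketch below is a back-of-the-envelope calculation, not METR's methodology: it assumes a 2-hour starting horizon (the low end of the range above), treats a "week-long" project as 40 working hours, and takes Moore's Law as a 24-month doubling.

```python
import math


def months_to_reach(current_minutes, target_minutes, doubling_months):
    """Months until task length grows from current to target at a fixed doubling time."""
    doublings = math.log2(target_minutes / current_minutes)
    return doublings * doubling_months


start = 2 * 60        # assumed starting horizon: 2-hour tasks
workday = 8 * 60      # 8-hour workday
work_week = 40 * 60   # assumed 40-hour working week

for label, target in [("8-hour workday", workday), ("40-hour week", work_week)]:
    fast = months_to_reach(start, target, doubling_months=4)
    slow = months_to_reach(start, target, doubling_months=7)
    print(f"{label}: {fast:.1f} to {slow:.1f} months")

# Ratio of doubling times: assumed 24 months for Moore's Law vs 7 months here.
speedup_vs_moore = 24 / 7
print(f"{speedup_vs_moore:.1f}x faster than a 24-month doubling")
```

Going from 2-hour to 8-hour tasks takes only two doublings, which is why the projected dates are so sensitive to whether the doubling time is 7 months or 4.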

The Turing Test: We Blew Past It

Alan Turing proposed his famous test in 1950: if a machine can carry on a conversation indistinguishable from a human's, it should be considered intelligent. In the 2020s, we blew past the Turing test and barely noticed. Modern LLMs regularly fool human evaluators in blind conversation tests. Most people cannot reliably distinguish frontier LLM output from human writing.

But the Turing test may have always been the wrong measure. It tests conversational mimicry, not understanding. A parrot can repeat words without comprehension. The real question is whether these systems understand — and that question leads us to the intelligence hierarchy.

🌐 The Intelligence Hierarchy

Not all intelligence is the same. A thermostat "responds" to temperature, but we would not call it intelligent. A chess engine "plans," but it cannot learn to cook dinner. An AI agent plans, learns, and executes — but it does not experience the world. There is a hierarchy here, and placing different systems on it clarifies what "intelligence" actually means.

┌─────────────────────────────────────────────────────┐

│ THE INTELLIGENCE HIERARCHY │

├─────────────────────────────────────────────────────┤

│ │

│ Level 6: AGI ·························· [Future?] │

│ Level 5: Collective Intelligence ······ [Emerging] │

│ Level 4: Autonomous Intelligence ······ [AI Agents]│

│ Level 3: Planning Intelligence ········ [AlphaGo] │

│ Level 2: Memory-Based Intelligence ···· [RecSys] │

│ Level 1: Reactive Intelligence ········ [ELIZA] │

│ Level 0: Pre-programmed ··············· [Deep Blue] │

│ │

└─────────────────────────────────────────────────────┘

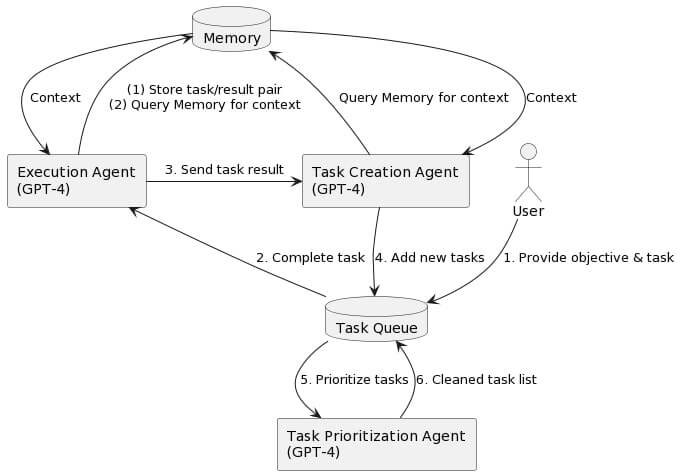

The following diagram traces the evolution from biological neurons to modern multi-agent systems — each step building on the last:

Level 0 — Pre-programmed: Systems that follow fixed rules. Deep Blue evaluated positions using hand-coded heuristics. Powerful within its domain, zero adaptability outside it.

Level 1 — Reactive Intelligence: Systems that respond to stimuli without memory. ELIZA (1966), the first chatbot, pattern-matched user input and generated responses. It had no memory of previous turns, no model of the user, and no understanding of language. Yet people found it surprisingly convincing — an early warning about our tendency to project intelligence onto responsive systems.

Level 2 — Memory-Based Intelligence: Systems that learn from accumulated experience. Netflix's recommendation engine remembers what you watched, identifies patterns, and predicts what you will enjoy next. It learns and adapts, but only within the narrow domain of content recommendation.

Level 3 — Planning Intelligence: Systems that reason about future states. AlphaGo did not just react to the board — it simulated millions of possible futures and selected moves that maximized long-term advantage. Move 37 emerged from this planning process: a decision that required imagining consequences dozens of moves ahead.

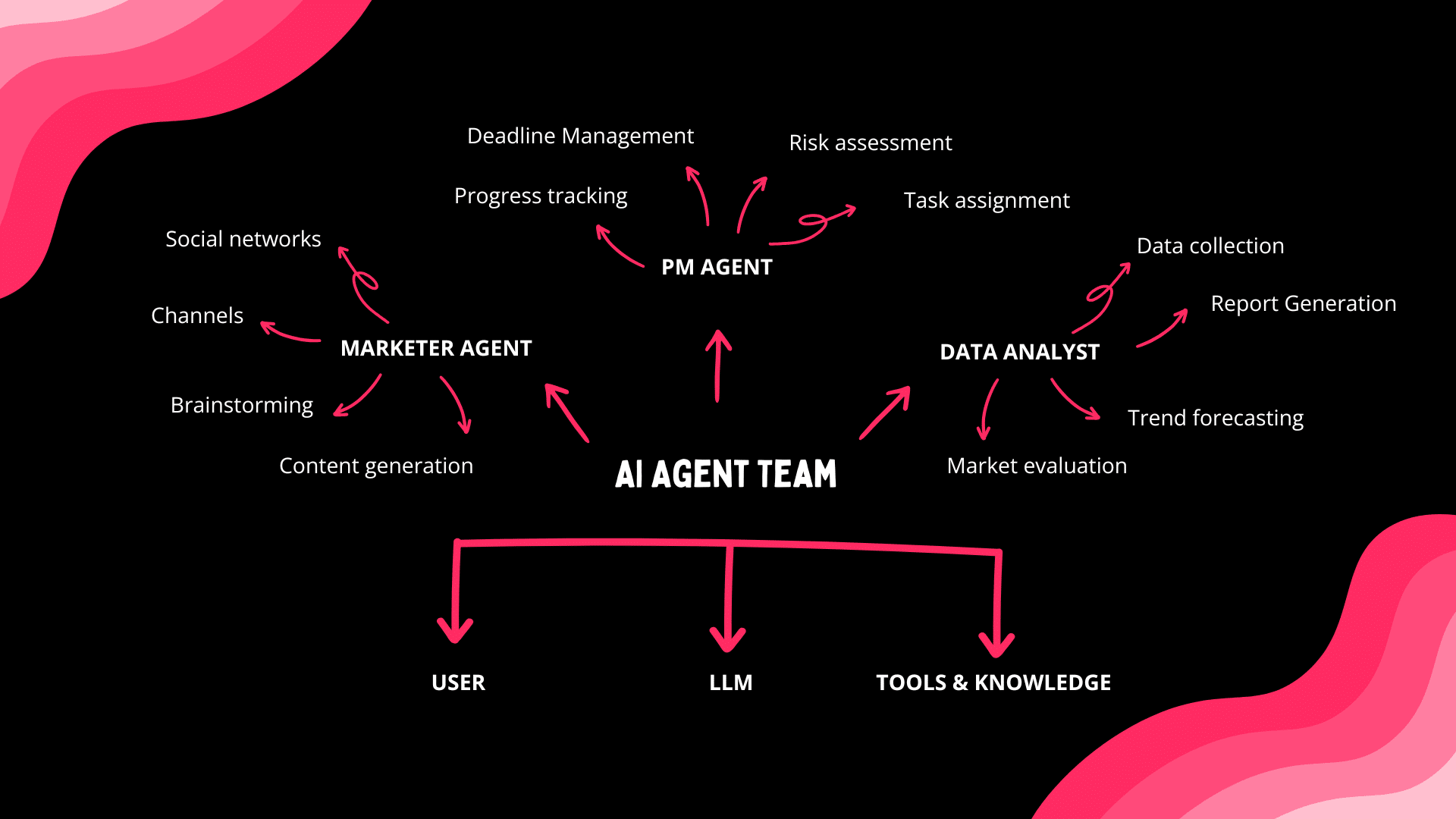

Level 4 — Autonomous Intelligence: Systems that set goals, plan execution, use tools, and iterate on results. This is where modern AI agents operate. An autonomous agent can receive a high-level objective ("research this market and produce a report"), break it into subtasks, search the web, analyze data, draft findings, check its own work, and deliver a polished result. It has persistent memory across sessions, uses external tools, and coordinates with humans. This mirrors the biological distinction between reflexive and deliberative intelligence.

Level 5 — Collective Intelligence: Emergent capabilities that arise from groups of intelligent agents working together. Ant colonies build complex structures without any single ant having a blueprint. Wikipedia produces a comprehensive encyclopedia without centralized editorial control. Multi-agent AI systems demonstrate the same principle: specialized agents collaborating on tasks too complex for any single agent, producing results that exceed the sum of their parts. In Project Sid (2024), 1,000 AI agents in Minecraft spontaneously invented jobs, debated tax policy, amended their constitution, and created art — with no programming for any of those behaviors.

Level 6 — Artificial General Intelligence (AGI): A system that matches or exceeds human-level intelligence across all cognitive domains. This remains theoretical, though frontier models from OpenAI, Anthropic, and Google exhibit surprisingly broad capabilities. The gap between Level 4 (current agents) and Level 6 (AGI) is narrowing faster than most researchers expected. See Agentic Workflows: The Path to AGI for a deeper analysis.

Agency: When Intelligence Acts

The intelligence hierarchy raises a fundamental question: what makes something an agent rather than an object? At a glance, the answer seems obvious — agents act on the world, objects are acted upon. But the distinction is deeper and more nuanced than it appears.

Neuroscientist Jeff Beck (Duke University) argues that agency is not a binary category but a spectrum of policy sophistication. Every system — from a rock to a human — has a policy: an input-output relationship that maps observations to actions. A rock's policy is trivial (gravity pulls, rock falls). A thermostat's policy is simple (temperature drops, heater fires). A human's policy is spectacularly complex (perceive threat → simulate counterfactuals → evaluate options → plan multi-step response → execute while monitoring feedback).

The distinguishing features of strong agency, Beck argues, are planning and counterfactual reasoning: the ability to internally simulate multiple possible futures before committing to action. A game engine that evaluates millions of positions via tree search (alpha-beta search in classical chess engines, Monte Carlo tree search in AlphaGo) is genuinely planning. A person deliberating over a career decision is simulating counterfactual lives. These internal rollouts are what separate agents from mere responders.

| Property | Weak Agency (Object-like) | Strong Agency (Agent-like) |
|---|---|---|
| Policy complexity | Simple input-output mapping | Context-dependent, long time horizons |
| Internal states | Few or none | Rich representations across time scales |
| Planning | None | Counterfactual simulation, multi-step rollout |
| Adaptivity | Fixed response | Context-dependent policy selection |
| Measurement | Indistinguishable from function | Transfer entropy reveals information integration |

But here is the catch: from the outside, you can never be certain something is planning. If the only thing you observe is a system's behavior — its policy outputs — a sophisticated lookup table and a genuine planner might produce identical actions. The internal computation matters, but it is hidden. This is why mechanistic interpretability — the project of cracking open AI models to see how they compute — is so important. Without looking inside, you cannot distinguish a system that memorized every chess position from one that genuinely reasons about the game.
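The lookup-table-versus-planner problem can be made concrete with a toy game. In the sketch below (an illustrative example, not something from Beck's work), two policies play a simple stone-taking game: one searches every possible future, the other applies a memorized rule with no search at all. The game and both function names are assumptions chosen for the demonstration.

```python
from functools import lru_cache

MAX_TAKE = 3  # each turn a player removes 1-3 stones; taking the last stone wins


@lru_cache(maxsize=None)
def can_win(stones):
    """True if the player to move can force a win, found by simulating futures."""
    return any(not can_win(stones - take)
               for take in range(1, MAX_TAKE + 1) if take <= stones)


def planner_policy(stones):
    """Choose a move by internally rolling out every possible continuation."""
    for take in range(1, MAX_TAKE + 1):
        if take <= stones and not can_win(stones - take):
            return take
    return 1  # every move loses against perfect play; take one stone


def lookup_policy(stones):
    """A memorized rule with no search: leave the opponent a multiple of 4."""
    return stones % 4 or 1


same = all(planner_policy(n) == lookup_policy(n) for n in range(1, 50))
print(same)  # True
```

From the outside the two policies are indistinguishable: they choose the same move in every position tested. Only inspecting the internals reveals that one simulates counterfactual futures and the other does not.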

Beck proposes a pragmatic solution drawn from Daniel Dennett's intentional stance: if the simplest computational model of a system's behavior involves planning and counterfactual reasoning, then for all practical purposes, you may call it an agent. The label reflects the best available explanation, not metaphysical certainty.

This framing has a direct parallel in energy-based models. In the Hopfield network — the same architecture that earned John Hopfield the 2024 Nobel Prize — intelligence manifests as energy minimization. The system "decides" by relaxing into its lowest-energy configuration, like a marble settling into a valley. The policy is not programmed — it emerges from the physics of the system itself. Energy-based models suggest that agency, at its most fundamental level, is a system finding low-energy solutions to high-dimensional constraint satisfaction problems. Whether the system is a brain minimizing prediction error, an LLM minimizing next-token loss, or a Boltzmann machine settling into a learned pattern, the underlying mathematics is the same.

The implication for AI agents is profound. When a Taskade AI agent orchestrates a multi-step workflow — gathering context from Memory, reasoning through options, triggering Automations, and adapting based on results — it exhibits exactly the kind of policy sophistication that characterizes strong agency. It maintains internal state across time. It plans. It adapts. Whether it "truly" plans or merely executes a very sophisticated function transformation is a question that may, as Beck suggests, ultimately dissolve — because the distinction may not exist at any level, including in biological brains.

🔄 The Life-Intelligence Connection

Here is a claim that sounds provocative until you examine the evidence: intelligence and life are the same thing.

Blaise Aguera y Arcas makes this argument in What Is Intelligence? by tracing computation from the molecular level upward. Consider the chain:

- DNA is a linear code — a sequence of symbols that encodes instructions. It is, quite literally, a program.

- Ribosomes read that code and assemble proteins — they are molecular Turing machines executing that program.

- Cells are computers running on ribosomal hardware, coordinating thousands of chemical reactions in parallel.

- Organisms are networks of cellular computers, communicating via chemical signals, electrical impulses, and hormones.

- Brains are specialized networks of cells (neurons) that compute at extraordinary speed and complexity.

- Cultures are networks of brains that pool intelligence through language, writing, and technology.

Each level is composed entirely of components from the level below. Ribosomes are not intelligent. Cells are not intelligent (or are they?). Neurons are not intelligent. But networks of neurons — brains — manifestly are. Intelligence emerges from the interactions of non-intelligent components, at sufficient scale and organization.

John von Neumann saw this in the 1940s when he developed the theory of self-reproducing automata. He proved that a machine could be designed to build copies of itself — and that the minimum complexity threshold for self-reproduction is roughly the complexity of a biological cell. Below that threshold, you get inert matter. Above it, you get life.

The BFF experiments described by Aguera y Arcas (named for the minimal, Brainfuck-derived programming language they use) demonstrate something even more startling: purpose emerges from random computation. When a "soup" of random programs is left to interact and overwrite one another, self-replicating programs arise and take over, with no explicit fitness function and no one programming goals. The computation itself generates purpose.

This matters for AI — and for our understanding of artificial life more broadly — because it suggests that intelligence is not a special sauce that only biology can produce. It is a property of sufficiently complex computational systems — regardless of whether those systems run on carbon or silicon.

Cultural evolution accelerates this process. Biological evolution operates at the speed of generations (decades). Cultural evolution operates at the speed of communication (years, months, now seconds). Technology is intelligence accelerating itself. And AI is the latest — and most dramatic — acceleration.

The recursion is dizzying: we are computers (brains) made of computers (cells) made of computers (molecules), and we have built computers (AI) that are beginning to build computers (AI agents building AI agents). The question is no longer whether silicon can think. The question is what happens when the recursion deepens.

💡 The Emergence Question

How does intelligence emerge from non-intelligent components? This is the central mystery of both neuroscience and AI research — and in 2026, we are no closer to a definitive answer than we were a decade ago. But we have much better evidence.

Biological Emergence

A single neuron is not intelligent. It is a cell that either fires or does not, based on whether its inputs exceed a threshold. There is nothing remotely "thoughtful" about this process. Yet arrange 86 billion of these cells in the right architecture, connect them with 100 trillion synapses, and you get Shakespeare, general relativity, and the feeling of nostalgia.

No one has satisfactorily explained how. We know the mechanics — action potentials, neurotransmitters, synaptic plasticity — but the leap from electrochemistry to subjective experience remains the "hard problem of consciousness," as philosopher David Chalmers called it.

Artificial Emergence

AI systems exhibit a parallel mystery. An artificial neuron is even simpler than a biological one: it multiplies inputs by weights, sums them, and passes the result through an activation function. Yet arrange billions of these in a Transformer architecture, train them on trillions of tokens of text, and you get a system that can:

- Write working code in 50+ programming languages

- Solve competition mathematics problems

- Engage in nuanced philosophical debate

- Generate creative fiction, poetry, and humor

- Reason through multi-step logical problems

None of these capabilities was explicitly programmed. They emerged from next-token prediction, a remarkably simple training objective. The base model was told only to predict the next word; the other abilities appeared as side effects of performing that task at sufficient scale.
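The artificial neuron and the next-token objective described above can each be sketched in a few lines. This is a minimal illustration, not how any production framework implements them: the function names, the sample values, the optional `bias` term, and the choice of a sigmoid activation are all assumptions made for the example.

```python
import math

def sigmoid(x: float) -> float:
    """Squash any real number into the range (0, 1)."""
    return 1.0 / (1.0 + math.exp(-x))

def neuron(inputs: list[float], weights: list[float], bias: float = 0.0) -> float:
    """Multiply inputs by weights, sum them, and apply an activation function."""
    if len(inputs) != len(weights):
        raise ValueError("inputs and weights must have the same length")
    weighted_sum = sum(x * w for x, w in zip(inputs, weights)) + bias
    return sigmoid(weighted_sum)

def next_token_loss(predicted_probs: dict[str, float], actual_next: str) -> float:
    """Cross-entropy for one prediction: small when the true next token
    was assigned a high probability, large when it was assigned a low one."""
    return -math.log(predicted_probs[actual_next])

# Illustrative values only (not taken from any real model).
print(neuron([1.0, 0.5, -1.0], [0.2, 0.4, 0.1]))
print(next_token_loss({"mat": 0.5, "dog": 0.5}, "mat"))
```

Training a language model amounts to nudging billions of such weights so that the loss, averaged over the training text, goes down.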

The Grokking Phenomenon

Perhaps the most striking evidence of artificial emergence is grokking: a phenomenon in which a neural network suddenly generalizes long after it has memorized its training data. In experiments, models trained on modular arithmetic first memorize the correct answers (achieving perfect training accuracy) while their test accuracy stays near chance. Training then continues for thousands more steps with no visible progress, until test accuracy suddenly jumps to near-perfect.

When researchers examine what happened inside the network (a discipline called mechanistic interpretability), they find that the model has independently discovered the underlying mathematical structure — in some cases, rediscovering trigonometric identities that took human mathematicians centuries to formalize. The model was never shown these identities. It derived them from raw data through a process that looks remarkably like mathematical insight.
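To see why trigonometric identities are relevant to modular arithmetic at all, it helps to write the idea out by hand. Interpretability analyses of small grokked networks describe a solution in which numbers are placed on a circle and added with angle-sum identities. The sketch below reproduces that idea in plain Python. It is not a trained network, and the function name, the modulus, and the choice of frequencies are assumptions made for illustration.

```python
import math

def modular_add_via_trig(a: int, b: int, p: int, frequencies=(1, 2, 3)) -> int:
    """Compute (a + b) mod p using only sines, cosines, and angle-sum identities."""
    scores = []
    for c in range(p):
        score = 0.0
        for k in frequencies:
            w = 2 * math.pi * k / p
            # Angle-sum identities build cos and sin of w*(a+b) from the parts.
            cos_ab = math.cos(w * a) * math.cos(w * b) - math.sin(w * a) * math.sin(w * b)
            sin_ab = math.sin(w * a) * math.cos(w * b) + math.cos(w * a) * math.sin(w * b)
            # This equals cos(w*(a+b-c)), which reaches 1 when c == (a+b) mod p.
            score += cos_ab * math.cos(w * c) + sin_ab * math.sin(w * c)
        scores.append(score)
    # The candidate with the highest score is the answer.
    return max(range(p), key=lambda c: scores[c])

print(modular_add_via_trig(17, 12, 23))  # prints 6, since 29 mod 23 == 6
```

No remainder operation appears anywhere in the function. The wrap-around comes for free from the periodicity of sine and cosine, which is the kind of structure a network can discover from data alone.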

Alien Intelligence

Andrej Karpathy, former head of AI at Tesla, described training LLMs as "summoning ghosts." The Welch Labs research channel took this further: these intelligences are fundamentally alien. Under a thin veneer of human language, they operate on absurdly complex patterns that no human can fully trace.

Geoffrey Hinton, the "Godfather of AI" and 2024 Nobel Prize winner, offered a concrete example of how this works. He noted that LLMs understand the meaning of made-up words from context, just as humans do. If you tell a model that someone "skronged" a piece of paper, it can infer from context that "skronging" means crumpling or folding. The model extracts meaning from relationships between words, much as children do when they learn language.

Is this "real" understanding? Or sophisticated pattern matching? The deeper you look, the more the distinction dissolves. Human intelligence may itself be pattern matching operating at a different scale and substrate. The question "Is AI really intelligent or just pattern matching?" might be equivalent to asking "Is the brain really intelligent or just electrochemistry?"

Both questions may have the same answer: yes, and that is the point.

The same cognitive loop (perceive, attend, remember, decide, act) runs in both biological brains and modern AI agents. Brains implement it with specialized cortical regions. AI agents implement it with context windows, retrieval-augmented generation, chain-of-thought reasoning, and tool calls. The architecture converges because the problem is the same: turning noisy sensory streams into goal-directed behavior.
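The five stages of that loop can be made concrete with a toy example. The sketch below is deliberately simplistic: the `ToyAgent` class, its method names, and the keyword-matching "attention" are hypothetical stand-ins, not a description of how any real agent framework or brain region works.

```python
from dataclasses import dataclass, field

@dataclass
class ToyAgent:
    """A toy perceive-attend-remember-decide-act loop (all names are hypothetical)."""
    goal: str
    memory: list[str] = field(default_factory=list)

    def perceive(self, raw_input: str) -> list[str]:
        # Perceive: split a noisy input stream into candidate observations.
        return [part.strip() for part in raw_input.split(";") if part.strip()]

    def attend(self, observations: list[str]) -> list[str]:
        # Attend: keep only the observations that mention the goal.
        return [obs for obs in observations if self.goal in obs.lower()]

    def remember(self, relevant: list[str]) -> None:
        # Remember: store relevant observations for later steps.
        self.memory.extend(relevant)

    def decide(self) -> str:
        # Decide: choose an action based on the most recent memory, if any.
        return f"handle: {self.memory[-1]}" if self.memory else "wait"

    def step(self, raw_input: str) -> str:
        # Act: return the chosen action (a real agent would call a tool here).
        self.remember(self.attend(self.perceive(raw_input)))
        return self.decide()

agent = ToyAgent(goal="invoice")
print(agent.step("weather is nice; Invoice #12 overdue; lunch at noon"))
```

In a real agent, each stage would be far richer (retrieval instead of keyword matching, a language model instead of a one-line rule), but the order of operations is the same.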

🏢 Workspace Intelligence: Memory + Intelligence + Execution

The biological intelligence hierarchy — memory, reasoning, action — is not just a theoretical framework. It is also a design blueprint. The most effective AI-powered tools mirror the architecture of biological intelligence, because that architecture evolved over billions of years to solve exactly the problem these tools face: coordinating knowledge, decision-making, and action in complex environments.

Taskade's Workspace DNA is built on three interconnected pillars that directly parallel biological intelligence:

Memory (Projects) = Long-Term Storage

Just as the hippocampus consolidates experiences into long-term memory, Taskade Projects store context, documents, notes, and knowledge that persists across sessions. This is not passive storage — it is active memory that AI agents can search, reference, and build upon. Every project becomes part of the workspace's accumulated knowledge, available for retrieval through multi-layer search (full-text + semantic HNSW + file content OCR).

Intelligence (Agents) = Reasoning and Decision-Making

The prefrontal cortex plans, reasons, and makes decisions based on information retrieved from memory. Taskade AI Agents serve the same function: they reason over workspace context using 11+ frontier models from OpenAI, Anthropic, and Google, applying that reasoning to tasks ranging from research and writing to code generation and data analysis.

Unlike simple chatbots that respond to single prompts, Taskade agents have persistent memory, custom tools, 22+ built-in tools, and the ability to coordinate with other agents. This is Level 4 on the intelligence hierarchy — autonomous intelligence that plans, executes, and iterates.

Execution (Automations) = The Motor System

The motor cortex translates decisions into physical action. Taskade Automations translate AI reasoning into real-world execution — sending emails, updating databases, triggering workflows across 100+ integrations, processing payments, syncing calendars. This is the action layer that closes the loop between thinking and doing.

The Self-Reinforcing Loop

The power of Workspace DNA lies in the feedback loop:

┌──────────────────────────┐
│      WORKSPACE DNA       │
│                          │
│  Memory ──► Intelligence │
│    ▲             │       │
│    │             ▼       │
│  Execution ◄─────┘       │
│                          │
└──────────────────────────┘

Memory feeds Intelligence (agents access project context)

Intelligence triggers Execution (agents activate automations)

Execution creates Memory (results are stored back in projects)

This mirrors the biological cycle: experience creates memories, memories inform reasoning, reasoning drives action, action generates new experience. It is the same self-reinforcing loop that makes biological intelligence so powerful — now implemented in a digital workspace.
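The three arrows of the loop can be written as three lines of code. This is a conceptual sketch only: `run_loop`, `reason`, and `execute` are hypothetical names invented for the example and do not correspond to any Taskade API.

```python
from typing import Callable

def run_loop(
    memory: list[str],
    reason: Callable[[list[str]], str],
    execute: Callable[[str], str],
    cycles: int = 2,
) -> list[str]:
    """Run the memory -> intelligence -> execution cycle a fixed number of times."""
    for _ in range(cycles):
        decision = reason(memory)   # Memory feeds Intelligence
        result = execute(decision)  # Intelligence triggers Execution
        memory.append(result)       # Execution creates Memory
    return memory

# Toy stand-ins for an agent and an automation.
def reason(memory: list[str]) -> str:
    return f"summarize {len(memory)} notes"

def execute(decision: str) -> str:
    return f"result of [{decision}]"

print(run_loop(["kickoff notes"], reason, execute))
```

Each pass leaves the memory one entry longer, so the next decision is made with more context than the last. That growth is what makes the loop self-reinforcing.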

Multi-Agent Collaboration = Collective Intelligence

Taskade also implements Level 5 intelligence — collective intelligence — through multi-agent collaboration. Specialized agents (researcher, writer, analyst, coder) can work together on complex tasks, each contributing domain expertise. This mirrors how human teams outperform individuals and how ant colonies achieve feats impossible for any single ant.

With 7-tier RBAC (Owner, Maintainer, Editor, Commenter, Collaborator, Participant, Viewer), teams can precisely control who — human or AI — has access to what, maintaining the human-in-the-loop oversight that responsible AI deployment demands.

The result is not a tool that replaces human intelligence. It is a system that augments it — handling the repetitive, data-intensive, and coordination-heavy tasks so that human intelligence can focus on what it does best: creativity, judgment, empathy, and strategic thinking.

Build your first intelligent workspace: Get started with Taskade →

🧩 The Intelligence Debate: Symbols vs. Neurons vs. Something Else

The question "what is intelligence?" has never had a consensus answer — and the disagreements reveal more than the agreements.

In 1984, three of AI's founding figures — John McCarthy (inventor of LISP, who coined "artificial intelligence"), Nils Nilsson (Stanford), and Edward Feigenbaum — appeared on The Computer Chronicles to describe what they believed intelligence was. Their answer was knowledge: intelligence meant encoding what experts know into formal rules that machines could follow. Feigenbaum called this "knowledge engineering." McCarthy went further — he argued that true machine intelligence required common sense, the vast implicit knowledge humans use to navigate the world without thinking. A medical expert system could diagnose infections, but it could not understand that the patient has a body, lives in a world, and might be afraid. McCarthy's insight — that intelligence requires understanding context, not just rules — was decades ahead of its time.

The symbolic AI camp believed intelligence was manipulation of symbols according to logical rules. The connectionist camp (led by Rosenblatt and later Hinton) believed intelligence emerged from the statistical patterns in weighted connections. The 1980s expert systems embodied the symbolic view. Modern LLMs embody the connectionist view. But the most important insight may be that both were right about different aspects — and modern transformer architectures synthesize both traditions, using attention mechanisms (symbolic-like structure) on top of learned representations (connectionist foundations).

The Oxford Union's AGI debate added a philosophical dimension that McCarthy's generation barely considered: if machines achieve general intelligence, what are the moral implications? The debate exposed a cascade of unresolved questions:

| Question | Symbolic AI Answer (1980s) | Connectionist Answer (2020s) | Unsettled |
|---|---|---|---|
| What is intelligence? | Rule following | Pattern matching at scale | Both? Neither? |
| Can machines understand? | Yes, via knowledge representation | Emergent from training | Depends on "understand" |
| Is consciousness required? | No; intelligence is computation | Unknown; emergent properties? | No consensus |
| What are the moral implications? | Not considered | Active debate | Urgent and open |
| Can intelligence be dangerous? | No; systems are brittle | Yes; systems are powerful | Yes |

McCarthy's common sense problem — the challenge of encoding "the things everybody knows" — remains unsolved in 2026. Modern LLMs approximate common sense through massive training data, but they still make errors that reveal gaps in genuine world understanding. Whether scaling will close this gap or whether something fundamentally new is needed is the central open question in the field.

🔮 The Future of Intelligence

Where is intelligence heading? Three trends will define the next decade.

Recursive Self-Improvement

In early 2026, Anthropic reported that 70-80% of its code is written by AI. OpenAI, Google DeepMind, and other frontier labs report similar figures. AI is already building AI. As models improve, they write better training code, which produces better models, which write even better code. This feedback loop — recursive self-improvement — is the mechanism that many researchers believe could eventually lead to superintelligence.

We are not there yet. Current AI systems cannot fundamentally redesign their own architectures or training processes without human guidance. But the gap is closing. METR's measurements show the length of tasks that frontier models can complete autonomously doubling roughly every 4-7 months. If that rate holds, the question of recursive self-improvement shifts from "if" to "when." (For more on the safety implications, see What Is AI Safety?)
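The difference between a 4-month and a 7-month doubling time is larger than it sounds, because doublings compound. The short calculation below makes that explicit. The function name and the 24-month horizon are arbitrary choices for illustration, and the sketch assumes the doubling rate stays constant, which nothing guarantees.

```python
def growth_factor(elapsed_months: float, doubling_months: float) -> float:
    """Return how many times larger a quantity becomes after elapsed_months
    if it doubles once every doubling_months."""
    return 2 ** (elapsed_months / doubling_months)

for doubling in (4, 7):
    factor = growth_factor(24, doubling)
    print(f"doubling every {doubling} months -> {factor:.1f}x after 24 months")
```

Over two years, the faster rate yields a 64-fold increase while the slower rate yields roughly 11-fold, so the uncertainty in the doubling time dominates any forecast built on it.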

The AGI Timeline Debate

When will we achieve artificial general intelligence? Estimates range from 2027 (Dario Amodei, CEO of Anthropic) to 2040+ (most academic researchers). The disagreement stems partly from definitional ambiguity — what counts as "general"? — and partly from uncertainty about whether scaling current architectures will suffice or whether fundamentally new approaches are needed.

What is clear is that the frontier is moving faster than anyone predicted. Five years ago, passing the Turing test was a distant milestone. Today, it is table stakes. The next milestones — autonomous task completion over days and weeks, genuine scientific discovery, robust common sense reasoning — may arrive sooner than consensus expects.

The Convergence

The most fascinating possibility is convergence between biological and artificial intelligence. Brain-computer interfaces, neural organoids, and bio-inspired AI architectures are blurring the line between carbon and silicon intelligence. The history traced in this article — from McCulloch-Pitts neurons to Transformers — may be the first half of a story that ends with intelligence becoming substrate-agnostic: a property of information processing itself, regardless of whether it runs on neurons, transistors, or something we have not yet invented.

As Alan Turing wrote in 1951: "It seems probable that once the machine thinking method had started, it would not take long to outstrip our feeble powers." Whether that future is utopian, dystopian, or (most likely) something messily in between, understanding what intelligence actually is — from neurons to AI agents — is the first step toward navigating it wisely.

Watch: Introducing Taskade Genesis — from understanding intelligence to building intelligent systems.

❓ Frequently Asked Questions

What is intelligence?

Intelligence is the capacity to acquire knowledge, reason about it, and apply it to achieve goals across diverse environments. It spans both biological systems (brains) and artificial systems (AI agents, large language models). The three core pillars — learning, reasoning, and acting — are shared across all known forms of intelligence, whether they run on neurons or neural networks.

What is the difference between narrow AI and artificial general intelligence?

Narrow AI excels at specific tasks: Deep Blue plays chess, and AlphaFold predicts protein structures. Artificial general intelligence (AGI) would match human-level performance across all cognitive domains. Current frontier LLMs occupy a middle ground: they can handle dozens of domains, from writing code to drafting essays, but still lack consistent common sense and physical world understanding. See Agentic Workflows: The Path to AGI for a deeper analysis of the trajectory.

Can AI be truly intelligent or is it just pattern matching?

This may be a false dichotomy. LLMs were trained to predict the next token, yet they develop capabilities like mathematical reasoning and code generation that were never explicitly taught. The grokking phenomenon shows models discovering mathematical identities from raw data. Human intelligence may itself be sophisticated pattern matching operating on biological hardware. The question of "real" versus "simulated" intelligence may dissolve as we better understand both substrates.

How does biological intelligence relate to artificial intelligence?

Both biological and artificial neural networks learn by adjusting connection strengths between nodes. Hebb's rule, the idea that connections between neurons that fire together grow stronger, was an early inspiration for weight adjustment in artificial networks, although modern AI training relies on backpropagation and gradient descent instead. Biological neurons are also far more complex than artificial ones, and biological intelligence operates in a body with continuous sensory feedback. AI systems process information differently but converge on similar high-level capabilities.

What is the Turing test and has AI passed it?

The Turing test asks whether a machine can converse indistinguishably from a human. Modern LLMs pass informal Turing tests routinely — most people cannot reliably distinguish their writing from human text. However, the test measures conversational fluency rather than deep understanding. AI still fails at tasks requiring embodied experience, persistent common sense, or physical intuition.

Is intelligence the same as consciousness?

No. Intelligence is the ability to process information, learn, and solve problems: an observable, measurable capacity. Consciousness is subjective experience, the felt quality of being aware. Current AI systems demonstrate intelligence, but whether they possess consciousness remains unknown. Most AI researchers believe current systems are not conscious, but there is no scientific consensus on how to test for machine consciousness.

How are AI agents more intelligent than chatbots?

Chatbots generate text responses but lack autonomy, persistent memory, and the ability to act. AI agents represent a higher form of artificial intelligence: they plan multi-step workflows, use tools, maintain persistent memory, coordinate with other agents, and take real-world actions through integrations. This mirrors the biological distinction between reactive intelligence (reflexes) and deliberative intelligence (planning and execution). Taskade agents exemplify this with 22+ built-in tools, multi-model support, and 100+ integrations.

How does Taskade implement AI intelligence in its workspace?

Taskade implements intelligence through Workspace DNA — three interconnected pillars: Memory (Projects store context), Intelligence (AI Agents reason using 11+ frontier models from OpenAI, Anthropic, and Google), and Execution (Automations carry out decisions via 100+ integrations). This architecture mirrors biological intelligence where memory feeds reasoning, reasoning drives action, and action creates new memories. Build your first intelligent workspace →

🚀 Start Building with Intelligent AI Agents

Intelligence — biological or artificial — is the capacity to learn, reason, and act. For billions of years, only carbon-based life could do it. Now silicon can too. The question is no longer whether machines are intelligent, but how we put that intelligence to work.

Taskade brings the full intelligence stack into your workspace:

- Memory — Projects that store and organize everything your team knows

- Intelligence — AI Agents powered by 11+ frontier models that reason over your context

- Execution — Automations that act on decisions across 100+ integrations

- Collective Intelligence — Multi-agent collaboration for tasks too complex for any single agent

- Genesis Apps — Build live apps from prompts and deploy to the Community Gallery

- Open-Source Agent Frameworks — Explore the ecosystem beyond Taskade

From neurons to neural networks, from reflex to reasoning, from isolation to collaboration — intelligence has always been about connection. Connect your team with AI that learns, remembers, and acts.

Get started with Taskade for free →

💡 Explore the AI intelligence cluster:

- What Is AI Safety? — Risks, alignment, and regulation

- How Do LLMs Work? — Transformers and attention explained

- What Is Mechanistic Interpretability? — Reverse-engineering how AI thinks

- What Is Grokking in AI? — When models suddenly learn to generalize

- What Is Artificial Life? — How intelligence emerges from code

- From Bronx Science to Taskade Genesis — Connecting the dots of AI history

- They Generate Code. We Generate Runtime — The Genesis Manifesto

- The BFF Experiment — From Noise to Life