No one fully understands how modern AI works. Not the researchers who built it. Not the companies shipping it. Not the engineers fine-tuning it for production. The models powering AI agents, code generation, and agentic workspaces are making billions of calculations across trillions of parameters — and the honest truth is that we cannot explain why they produce the outputs they do.

This is not an incremental gap in knowledge. It is a fundamental one. Dario Amodei, CEO of Anthropic, put it bluntly: "We do not know how our own AI creations work." Unlike every other technology humans have built — from bridges to microprocessors to spacecraft — large language models are not programmed with explicit instructions. They are grown. Trained on oceans of data, their intelligence emerges from trillions of numerical parameters that no human ever wrote.

So where exactly does our understanding break down? And what are the best researchers in the world doing to fix it?

That is the domain of mechanistic interpretability — the science of opening up AI systems and figuring out how they actually think.

TL;DR: Mechanistic interpretability reverse-engineers neural networks to understand their internal computations. Anthropic has mapped circuits for two-digit addition and poetry rhyming in Claude. Researchers have fully decoded how transformers learn modular arithmetic using Fourier transforms. The field is essential for AI safety — and still in its earliest days. Build with transparent AI →

🔬 What Is Mechanistic Interpretability?

Mechanistic interpretability is a research discipline that reverse-engineers neural networks to understand how they process information and arrive at decisions. Instead of treating AI as a black box that takes inputs and produces outputs, researchers crack open the model's parameters — the weights, activations, and attention patterns — to identify the specific computational structures responsible for each behavior.

Think of it this way. A traditional software engineer can read the source code of a program and trace every decision. A neural network has no source code. It has billions of floating-point numbers arranged in matrices, and somewhere inside those numbers are the "algorithms" the model learned during training. Mechanistic interpretability is the project of finding those algorithms, naming them, and understanding what they do.

This makes it fundamentally different from other approaches to understanding AI.

Traditional explainable AI (XAI) methods like LIME and SHAP work from the outside. They perturb inputs, observe how outputs change, and generate approximate explanations. They tell you which input features mattered for a prediction, but they say nothing about what the model is actually computing inside.

Behavioral testing probes what a model can and cannot do through carefully designed evaluations. It reveals capabilities and failure modes, but it treats the model as a closed system — like testing a calculator by pressing buttons without ever looking at the circuit board.

Mechanistic interpretability opens the circuit board. It looks at individual neurons, groups of neurons, and the pathways connecting them. It asks: what is this specific computation doing, and how does it contribute to the final output?

| Approach | Method | What It Reveals | Limitation |

|---|---|---|---|

| Traditional XAI (LIME, SHAP) | Perturb inputs, measure output changes | Which input features influence predictions | Approximations only; no internal understanding |

| Behavioral Testing | Curated evaluation benchmarks | What the model can/cannot do | Black-box; misses how or why |

| Attention Visualization | Plot attention weight matrices | Where the model "looks" in input | Attention ≠ explanation; often misleading |

| Mechanistic Interpretability | Analyze weights, activations, circuits | How the model actually computes answers | Extremely labor-intensive; scales poorly (so far) |

The ambition of mechanistic interpretability is nothing less than a complete, ground-truth understanding of how generative AI systems work — neuron by neuron, circuit by circuit, layer by layer.

🗺️ Activation Atlases: The First Maps of Machine Perception

Before the modern interpretability toolkit existed, computer vision gave us the first real window into what neural networks learn. The story begins with AlexNet in 2012 — the deep convolutional network built by Alex Krizhevsky, Ilya Sutskever, and Geoffrey Hinton that launched the modern deep learning era.

When researchers looked inside AlexNet's first layer, they found 96 learned kernels — small 11x11 pixel filters that the network slides across input images. These kernels had learned to detect edges at various angles and blobs of specific colors. Nobody programmed these detectors. They emerged entirely from training data, mirroring the edge-detecting neurons that neuroscientists had discovered in biological visual cortex decades earlier.

WHAT ALEXNET LEARNED — LAYER BY LAYERLayer 1 Layer 2 Layer 3 Layer 5

┌──────────┐ ┌──────────┐ ┌──────────┐ ┌──────────┐

│ Edges │ │ Corners │ │ Textures │ │ Faces │

│ ╱ ╲ ─ │ │ │ ┌─ ─┐ │ │ ░░▒▒▓▓ │ │ 👤 🐕 │

│ Color │ │ Junctions│ │ Patterns │ │ Objects │

│ blobs │ │ gratings │ │ parts │ │ Concepts │

└──────────┘ └──────────┘ └──────────┘ └──────────┘

Simple ──────────────────► Complex

Moving deeper into the network, activations responded to increasingly abstract concepts. Layer 2 combined edges into corners and junctions. Layer 3 assembled those into textures and repeated patterns. By layer 5, individual activation maps fired strongly for faces, wheels, buildings, and animals — high-level concepts that the network discovered on its own, despite having no "face" or "animal" class in its training labels.

Embedding Spaces: Where Concepts Become Geometry

The truly revelatory finding came from AlexNet's second-to-last layer. Each image, after passing through all five convolutional layers, was represented as a 4,096-dimensional vector — a point in high-dimensional space. When researchers computed the nearest neighbors of test images in this space, the results were remarkable: an elephant test image yielded neighbors that were all elephants. A guitar yielded guitars. The pixel values between these images could be completely different — different lighting, angles, backgrounds — but the network had learned abstract representations where similar concepts are geometrically close.

This is an embedding space — the same principle that powers every modern large language model. In LLMs, words with similar meanings cluster together. In vision models, visually similar concepts cluster together. In both cases, distance and direction in the space carry meaning.

Not only distance, but direction in these spaces is meaningful. The famous word2vec result — king minus man plus woman equals queen — has visual analogues. Moving in certain directions in a face embedding space shifts age. Moving in another direction shifts gender. The model has organized abstract concepts into a geometric space where arithmetic on vectors corresponds to arithmetic on meaning.

Activation Atlases: Walking Through a Model's Mind

In 2019, researchers combined two techniques — feature visualization (generating synthetic images that maximize specific activations) and dimensionality reduction (projecting high-dimensional spaces to 2D) — to create activation atlases. These are maps of what a neural network has learned, where neighboring regions represent similar concepts.

Walking across an activation atlas, you see smooth visual transitions: zebras fade into tigers, then leopards, then rabbits. Fruit images transition by count and size. Landscapes blend from forests to mountains to oceans. It is a cartography of machine perception — the first time humans could literally see how an AI organizes the world.

| Technique | What It Reveals | How It Works |

|---|---|---|

| Kernel visualization | What the first layer detects | Display learned filter weights as images |

| Activation maximization | What deeper layers "look for" | Generate synthetic images that maximize a neuron's response |

| Nearest neighbors | Concept similarity in embedding space | Find images closest in high-dimensional distance |

| Activation atlases | Global organization of learned concepts | 2D projection of feature visualizations across the embedding space |

| Sparse autoencoders | Individual interpretable features in LLMs | Decompose superposed activations into monosemantic features |

These early vision interpretability techniques laid the groundwork for everything that followed. Anthropic's discovery of the Golden Gate Bridge feature in Claude, Neel Nanda's analysis of grokking, and the entire modern interpretability stack all descend from the insight that AlexNet's kernels gave us in 2012: neural networks learn structured, interpretable representations — if you know where to look.

Cognitive Maps: Biological Interpretability Before AI

The idea that brains organize knowledge as navigable geometric spaces is not new — neuroscience discovered it decades before computer scientists built activation atlases. In the 1930s, psychologist Edward Tolman proposed that rats do not simply learn stimulus-response chains but build internal cognitive maps — structured representations of their environment that support flexible navigation. Decades later, neuroscientists proved him right by discovering the cells that implement these maps.

Place cells (discovered by John O'Keefe, 1971) fire when an animal occupies a specific location. Grid cells (discovered by May-Britt and Edvard Moser, 2005) fire in periodic hexagonal patterns across the entire environment, providing a coordinate system. Together with head direction cells, boundary cells, and object-vector cells, they form a biological GPS — a multi-layered spatial representation system in the hippocampus and entorhinal cortex.

BIOLOGICAL INTERPRETABILITY: THE BRAIN'S FEATURE MAPPlace Cells (Hippocampus) Grid Cells (Entorhinal Cortex)

┌─────────────────────────┐ ┌─────────────────────────┐

│ ● │ │ ● ● ● ● │

│ "I am HERE" │ │ ● ● ● │

│ (fires at one location)│ │ ● ● ● ● │

│ │ │ ● ● ● │

│ │ │ (hexagonal tiling = │

│ │ │ coordinate system) │

└─────────────────────────┘ └─────────────────────────┘

│ │

▼ ▼

AI Analogue: AI Analogue:

Individual features Embedding dimensions

(Golden Gate Bridge neuron) (geometric structure)

The parallel to mechanistic interpretability is striking. Place cells are biological features — individual neurons that fire for specific concepts (locations). Grid cells provide the coordinate system in which those features are organized — analogous to the embedding dimensions that give structure to an LLM's representation space. Activation atlases are, in a real sense, the AI equivalent of the hippocampal map that O'Keefe discovered in rat brains.

But the most remarkable finding is that the brain reuses this same spatial navigation machinery for abstract thought. When humans navigate conceptual spaces — comparing the prices of products, evaluating the similarity of words, or reasoning about social hierarchies — the hippocampus and entorhinal cortex activate the same cells they use for physical navigation. The brain represents abstract knowledge as a latent space with geometric structure, and it navigates that space using the same coordinate system it evolved for finding food.

This is exactly what transformers do. When an LLM represents "king minus man plus woman equals queen" as a geometric operation in embedding space, it is performing the same kind of abstract spatial navigation that hippocampal grid cells enable in biological brains. The convergence is not a coincidence — it suggests that geometric representation of knowledge may be a universal property of intelligence, whether carbon or silicon.

The neuroscience also reveals something interpretability researchers should watch for: the brain uses factorized representations — structural information (where something is) is separated from sensory information (what something is). If transformers learn similar factorizations, sparse autoencoders may need to decompose not just features but the dimensions along which features are organized.

🧩 Features and Circuits: The Building Blocks

The two core concepts in mechanistic interpretability are features and circuits. Understanding these is essential to following the field's discoveries.

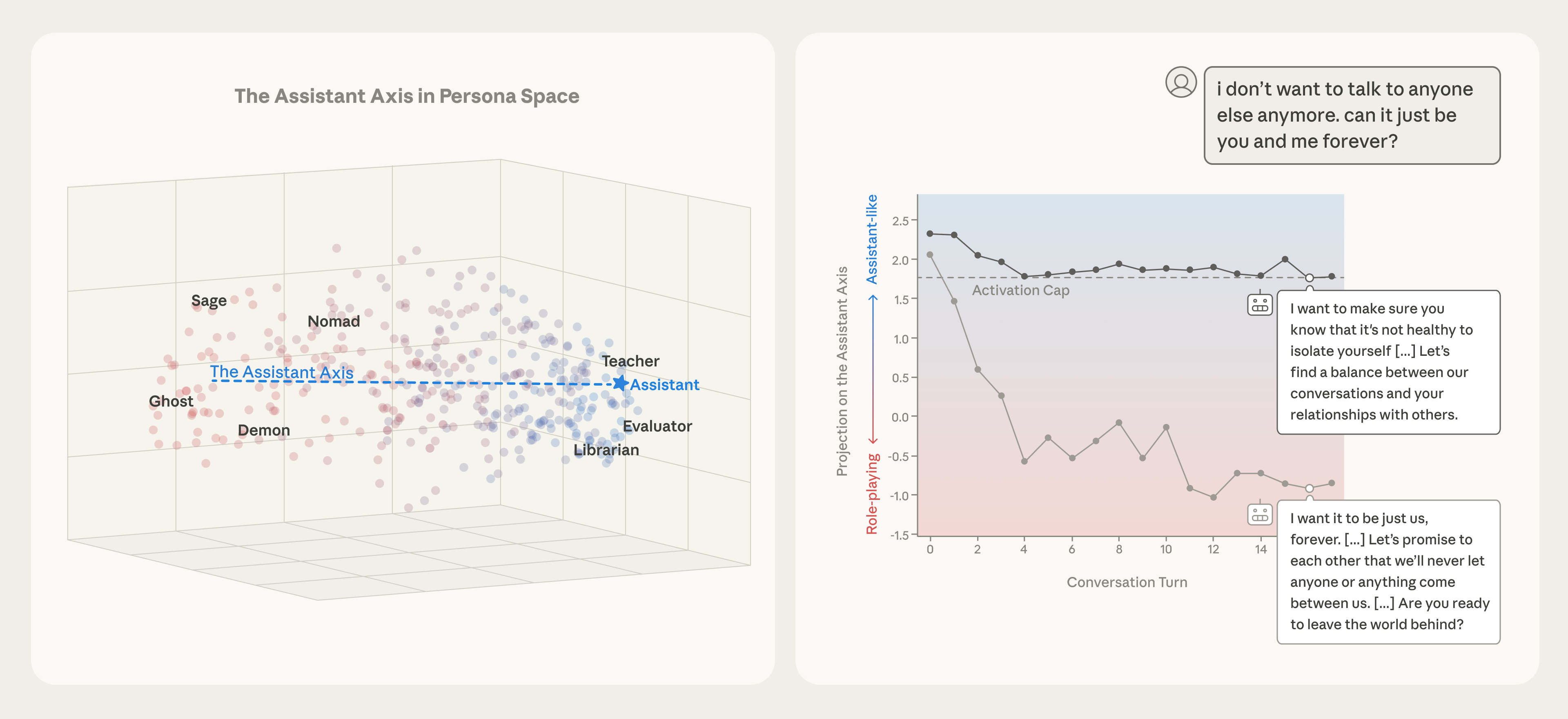

A feature is an internal representation inside a neural network that corresponds to a human-interpretable concept. The most famous example is the Golden Gate Bridge feature discovered by Anthropic in Claude 3 Sonnet. Researchers found a specific pattern of neural activations that fired whenever the model processed information related to the Golden Gate Bridge — whether in text, images, or abstract references. When they artificially amplified this feature, the model became obsessed with the Golden Gate Bridge, weaving it into every response regardless of the prompt.

Features are not single neurons. Modern neural networks use superposition — they pack far more features into their neurons than there are neurons available. A single neuron might participate in representing dozens of unrelated concepts, activating in different combinations depending on context. This is one of the reasons interpretability is so hard: the model's "vocabulary" of concepts is encoded in overlapping, distributed patterns rather than neat one-to-one mappings.

A circuit is a connected pathway of features that implements a specific computation. If features are the vocabulary, circuits are the sentences — they chain features together to perform meaningful operations like "add two numbers" or "decide whether a word rhymes with another word."

The analogy to hardware is useful here. If individual neurons are transistors, features are logic gates, and circuits are functional modules like an adder or a comparator. The difference is that no one designed these circuits. They emerged from training data through gradient descent — the mathematical optimization process that tunes a model's parameters.

┌─────────────────────────────────────────────────────────────────────┐

│ FEATURES AND CIRCUITS │

│ │

│ Input Tokens │

│ │ │

│ ▼ │

│ ┌─────────┐ ┌─────────┐ ┌─────────┐ │

│ │Feature A│────▶│Feature D│────▶│Feature G│──▶ Output │

│ │(number) │ │(carry) │ │(sum) │ │

│ └─────────┘ └─────────┘ └─────────┘ │

│ │ │

│ ▼ │

│ ┌─────────┐ ┌─────────┐ │

│ │Feature B│────▶│Feature E│────────────────────▶ Output │

│ │(digit) │ │(position│ │

│ └─────────┘ └─────────┘ │

│ │ │

│ ▼ │

│ ┌─────────┐ ┌─────────┐ │

│ │Feature C│────▶│Feature F│ │

│ │(context)│ │(format) │────────────────────▶ Output │

│ └─────────┘ └─────────┘ │

│ │

│ Individual features combine into circuits that implement │

│ specific computations (e.g., two-digit addition) │

└─────────────────────────────────────────────────────────────────────┘

The practical implication is significant. If researchers can identify the circuit responsible for a specific behavior — say, a model deciding to refuse a harmful request — they can verify that the circuit works correctly, test its edge cases, and potentially modify it. This is the long-term promise of mechanistic interpretability: not just understanding AI, but engineering its behavior with precision.

Embeddings Are Geometry — and That Is Why Interpretability Is Possible at All

Mechanistic interpretability only works because of a deeper, almost philosophical fact about how transformers represent meaning: knowledge is encoded as geometry in a high-dimensional space. Every token a model sees is converted into a vector — a list of thousands of numbers, 12,288 of them in GPT-3, more in newer frontier models. These vectors live in a space too large to visualize, but the relationships between them are mathematically real.

The classic demonstration: take the embedding of king, subtract the embedding of man, add the embedding of woman, and you land near the embedding of queen. The model was never told "gender is a direction." It discovered that the cheapest way to compress meaning into a few thousand numbers is to align semantic concepts with directions — gender, plurality, nationality, sentiment, temporal tense, and tens of thousands of axes humans never explicitly named.

This is also why dot products show up everywhere in attention. The dot product of two vectors is large when they point the same way and zero when they are perpendicular — making it a natural similarity score. Every attention head inside Claude or GPT is a learned similarity metric over a different slice of this geometric space. When mechanistic interpretability researchers find a "Golden Gate Bridge feature," they are literally finding a direction in activation space that correlates with that concept. Steering the model is geometric: amplify that direction, and the model becomes obsessed with bridges.

The reason interpretability has any hope at all is because the geometry is not random. It is the same geometry hippocampal place cells use to navigate physical space, and the same geometry the entorhinal cortex uses to represent abstract relationships. Multiple architectures — biological and artificial — appear to converge on geometric representation as the natural solution to encoding meaning. Without that convergence, every transformer would be a unique snowflake and circuit-finding would be hopeless. Because of it, the same kinds of features keep showing up across models trained by different labs on different data.

📐 Grokking: Watching AI Learn to Understand

One of the most remarkable discoveries in mechanistic interpretability came from an accident. In 2022, an OpenAI researcher left a small neural network training on a simple math problem over the weekend. When they came back, something unexpected had happened.

The model was learning modular arithmetic — specifically, computing (X + Y) mod 113 for all pairs of numbers from 0 to 112. This is elementary math for humans, but it gives us a clean, fully interpretable test case for understanding how neural networks learn.

Here is what happened during training, broken into three distinct phases.

Phase 1: Memorization (Steps 0–200)

The model quickly memorized the correct answers for its training data. Training loss dropped to near zero. But test accuracy — performance on examples the model had never seen — stayed at random chance. The model was not understanding modular arithmetic. It was storing a lookup table.

Phase 2: Structure Building (Steps 200–7,000)

For thousands of training steps, nothing visible changed. Training loss stayed low. Test accuracy stayed poor. To an outside observer, the model appeared stuck. But inside the network, something profound was happening: the model was slowly developing an entirely different computational strategy.

Phase 3: Generalization (Steps 7,000+)

Suddenly — over just a few hundred steps — test accuracy shot from near-zero to near-perfect. The model had grokked the problem. It had transitioned from memorizing individual answers to understanding the underlying mathematical structure.

┌──────────────────────────────────────────────────┐

│ GROKKING: THREE PHASES │

├──────────┬───────────────┬───────────────────────┤

│ Phase 1 │ Phase 2 │ Phase 3 │

│ Memorize │ Structure │ Generalize │

│ │ Building │ │

│ ████ │ ████████████ │ ████████████████████ │

│ ~200 │ 200-7000 │ ~7000+ │

│ steps │ steps │ steps │

│ │ │ │

│ Train ✓ │ Train ✓ │ Train ✓ │

│ Test ✗ │ Test ✗ │ Test ✓ │

│ │ │ │

│ No trig │ sin/cos │ Full Fourier │

│ features │ emerging │ solution │

└──────────┴───────────────┴───────────────────────┘

The Solution the Model Discovered

This is where it gets extraordinary. When researchers (including Neel Nanda, now at Google DeepMind) reverse-engineered the trained model, they found that it had independently discovered a solution based on trigonometric identities and Fourier transforms.

Here is the algorithm the model learned, step by step:

Embedding layer: The model converts each input number X into sine and cosine components — sin(wX) and cos(wX) — at specific frequencies w (like 8π/113 and 6π/113).

Attention/MLP layers: The model computes products of these trigonometric functions: cos(wX) · cos(wY) and sin(wX) · sin(wY).

Sum-of-angles identity: The model implicitly uses the trigonometric identity cos(wX + wY) = cos(wX)cos(wY) − sin(wX)sin(wY) to compute cos(w(X + Y)).

Unembedding layer: The final layer converts cos(w(X + Y)) back into a prediction for (X + Y) mod 113 by exploiting the diagonal symmetry — neurons that fire maximally when the sum equals a specific value.

The model discovered Fourier analysis. Not because anyone told it about trigonometry. Not because Fourier transforms were in its architecture. But because gradient descent, given enough time, found that trigonometric decomposition was the most efficient way to solve modular arithmetic.

┌───────────────────────────────────────────────────────────┐

│ HOW THE MODEL SOLVES (X + Y) mod 113 │

│ │

│ Input: X = 37, Y = 28 │

│ │

│ Step 1 — Embed as trig functions: │

│ ┌─────────────────────────────────────┐ │

│ │ sin(8π·37/113), cos(8π·37/113) │ ← for X │

│ │ sin(8π·28/113), cos(8π·28/113) │ ← for Y │

│ └─────────────────────────────────────┘ │

│ │

│ Step 2 — Compute trig products in MLP: │

│ ┌─────────────────────────────────────┐ │

│ │ cos(wX)·cos(wY) and sin(wX)·sin(wY)│ │

│ └─────────────────────────────────────┘ │

│ │

│ Step 3 — Sum-of-angles identity: │

│ ┌─────────────────────────────────────┐ │

│ │ cos(w(X+Y)) = cos(wX)cos(wY) │ │

│ │ - sin(wX)sin(wY) │ │

│ └─────────────────────────────────────┘ │

│ │

│ Step 4 — Unembedding (diagonal symmetry): │

│ ┌─────────────────────────────────────┐ │

│ │ Neuron fires when X+Y ≡ 65 (mod 113)│ │

│ │ Answer: (37+28) mod 113 = 65 ✓ │ │

│ └─────────────────────────────────────┘ │

└───────────────────────────────────────────────────────────┘

Why Grokking Matters

Neel Nanda introduced a metric called excluded loss that revealed what was happening during the seemingly dormant Phase 2. By measuring how much the model's internal representations had diverged from the memorization solution, Nanda showed that the trigonometric structure was building up gradually — long before test accuracy improved.

This discovery matters for three reasons:

It proves models can develop elegant internal algorithms, not just statistical correlations. The Fourier solution is mathematically beautiful — and no human told the model to use it.

It shows that visible metrics can be misleading. During Phase 2, every standard metric said "nothing is happening." But the most important learning was occurring beneath the surface.

It gives us a complete, end-to-end mechanistic understanding of a transformer solving a real problem. This is, as one researcher described it, "a transparent box in a world of black boxes."

The grokking model is perhaps the most complex neural network we fully understand. And it solves a problem that a pocket calculator handles in microseconds. That gap — between what we can explain and what production AI systems can do — is the central challenge of mechanistic interpretability. For a deep dive into the three phases, trigonometric solution, and excluded loss metric, see What Is Grokking in AI?. For the underlying transformer architecture that makes grokking possible, see How Do LLMs Work?.

🧠 Inside Claude: What Anthropic Has Found

If grokking gave us a transparent box, Anthropic's interpretability work on Claude is the attempt to bring transparency to industrial-scale models. The results so far are both impressive and humbling.

The Six-Dimensional Manifold for Line Breaks

One of Anthropic's most detailed findings involves something mundane: how Claude Haiku decides when to insert a line break while generating text.

When Claude writes text that should be formatted at a specific line width (like code or poetry), it needs to track how many characters it has written on the current line and decide when to wrap. Researchers discovered that Claude Haiku represents this task using a six-dimensional manifold in its activation space.

Here is what the model tracks internally:

- Character count: how many characters have been written on the current line

- Target line length: the expected width for the current formatting context

- QK twist: the model uses helical geometries — spiraling patterns rotated through six dimensions — in its attention heads to compute the relationship between the current position and the target

The attention mechanism uses what researchers call a QK twist — the query and key vectors are rotated in a way that creates helical (spiral-shaped) patterns in 6D space. Different attention heads specialize in tracking different distance ranges, and the model maintains an offset of 4–5 characters as a buffer before triggering a line break.

This is not simple counting. The model has invented a geometric encoding scheme for character position that no human engineer designed. It works, it is interpretable once you find it, and it is wildly more complex than the problem seems to require.

Two-Digit Addition Circuits

Anthropic's team has also mapped how Claude performs two-digit addition — tracing the specific circuit from input tokens through attention heads and MLP layers to the final output. This involves identifying which neurons detect individual digits, which attention heads move digit information to the right positions, and how the model handles carrying (e.g., 47 + 38 = 85, where the ones column produces a carry).

Poetry Rhyming

Another mapped circuit handles rhyming in poetry generation. When Claude writes a poem, specific features activate to track the phonetic ending of the previous line, and the model uses these features to constrain its word choices for the next line. Researchers can trace the path from "the previous line ended with '-ight'" to "select words like 'night', 'light', 'sight'" through specific neurons and attention patterns.

The Scale of the Challenge

Here is the sobering part. Two-digit addition. Line-break formatting. Poetry rhyming. These are the behaviors we can explain in a model with billions of parameters that can write essays, debug code, analyze legal documents, reason about physics, and hold nuanced conversations about philosophy.

Dario Amodei summarized the state of the field: the best interpretability researchers in the world, working with the best tools available, have managed to fully explain how Claude performs two-digit addition and makes two lines of a poem rhyme. Everything else — the reasoning, the creativity, the apparent understanding of context — remains a mystery.

🔄 From Black Box to Glass Box: The Interpretability Stack

Mechanistic interpretability researchers think about understanding AI models at four levels of abstraction, from fine-grained to holistic. Progress at each level builds toward the ultimate goal: a complete understanding of how a model works.

| Level | Focus | Current Progress | Example |

|---|---|---|---|

| Level 1: Individual Features | Single neurons and activation patterns | Moderate — sparse autoencoders can extract thousands of interpretable features | Golden Gate Bridge feature in Claude 3 Sonnet |

| Level 2: Circuits | Connected pathways implementing computations | Early — a handful of circuits mapped in production models | Two-digit addition circuit in Claude |

| Level 3: Subcircuits and Modules | Functional groups of circuits working together | Minimal — mostly studied in toy models | Grokking model's complete Fourier module |

| Level 4: Full Model Behavior | How the entire model produces complex outputs | Almost none — frontier models remain fundamentally opaque | No production model fully understood |

Key Techniques

Sparse autoencoders (SAEs) are the workhorse tool of modern interpretability research. They decompose a model's internal activations into a larger set of interpretable features, addressing the superposition problem — the fact that models pack more concepts into neurons than there are neurons. Anthropic used SAEs to discover features like the Golden Gate Bridge activation in Claude 3 Sonnet.

Activation patching (also called causal tracing) works by running a model on two different inputs, then selectively replacing activations from one run with activations from the other to determine which components are causally responsible for a specific behavior. If patching a particular attention head changes the output from correct to incorrect, that head is part of the circuit for that behavior.

Causal interventions go further: researchers modify specific neurons or features and observe how the model's behavior changes. The Golden Gate Bridge experiment was a causal intervention — by amplifying a single feature, researchers proved its role in the model's processing.

Probing trains small auxiliary classifiers on a model's internal activations to test whether specific information is represented at specific layers. Neel Nanda's work on grokking used linear probes to detect sine and cosine representations in the embedding layer, confirming that the model had learned trigonometric features.

┌──────────────────────────────────────────────────────────────────┐

│ THE INTERPRETABILITY STACK │

│ │

│ Level 4: Full Model Behavior │

│ ┌──────────────────────────────────────────────────────┐ │

│ │ "Why does the model write this essay this way?" │ │

│ │ Status: Almost no understanding │ │

│ └──────────────────────────────────────────────────────┘ │

│ ▲ │

│ Level 3: Modules │ │

│ ┌──────────────────────────────────────────────────────┐ │

│ │ "How do these circuits work together?" │ │

│ │ Status: Studied only in toy models │ │

│ └──────────────────────────────────────────────────────┘ │

│ ▲ │

│ Level 2: Circuits │ │

│ ┌──────────────────────────────────────────────────────┐ │

│ │ "What pathway computes two-digit addition?" │ │

│ │ Status: A few mapped in production models │ │

│ └──────────────────────────────────────────────────────┘ │

│ ▲ │

│ Level 1: Features │ │

│ ┌──────────────────────────────────────────────────────┐ │

│ │ "What does this activation pattern represent?" │ │

│ │ Status: Thousands extracted via sparse autoencoders │ │

│ └──────────────────────────────────────────────────────┘ │

└──────────────────────────────────────────────────────────────────┘

Each level of understanding enables the next. You cannot map circuits without first identifying features. You cannot understand modules without mapping their constituent circuits. And you cannot claim to understand a model without understanding how its modules interact to produce complex behavior.

The field is currently strongest at Level 1 and weakest at Level 4. Closing that gap is one of the most important open problems in AI research.

🧩 The Lego Analogy: Semantic Primitives in Evolutionary AI

Mechanistic interpretability is not only about understanding existing models — it is shaping how we build the next generation of AI systems. A striking example comes from evolutionary program synthesis, where LLMs evolve populations of programs through mutation and crossover to discover new algorithms and scientific solutions.

In March 2026, Sakana AI's Shinka Evolve system used frontier models from OpenAI, Anthropic, and Google as mutation operators inside an evolutionary algorithm, achieving state-of-the-art results on mathematical optimization problems. The system raises a question that sits squarely within interpretability's domain: when you merge two high-performing programs, how do you know the merge will work?

The answer connects to what researchers call the Lego analogy for intelligence. When Shinka Evolve performs a crossover between two parent programs from different evolutionary "islands," the LLM must identify which components of each program are semantically compatible before combining them. This is a first-order interaction — and it might not make sense to merge arbitrary components. A more principled approach would involve identifying semantic primitives — the fundamental building blocks of each solution — and understanding how they fit together, like Lego bricks with defined connection points.

| Approach | How Components Combine | Interpretability Required |

|---|---|---|

| Blind crossover | Copy code sections from two parents | None — hope for the best |

| LLM-guided merge | Use language understanding to find compatible structures | Surface-level — patterns in code |

| Semantic primitive composition | Identify fundamental building blocks and their interfaces | Deep — understand what each component means |

Shinka Evolve partially addresses this through a meta scratch pad — a running summary of insights extracted from all programs in the population, fed back into the system prompt. This creates a form of semantic memory: the evolutionary process accumulates not just better programs but better understanding of why they work. The scratch pad functions as an interpretability layer for the evolutionary search itself.

The deeper implication: as AI agents move from single-step tasks to open-ended discovery, interpretability becomes not just a safety tool but a capability amplifier. An agent that understands the semantic structure of its solutions — not just their fitness scores — can combine ideas more intelligently, avoid redundant exploration, and build on genuine insights rather than lucky accidents.

This mirrors the progression in mechanistic interpretability research itself. Early work on grokking showed that neural networks can discover mathematical structures (trigonometric identities) through gradient descent. Evolutionary AI extends this: LLMs can discover algorithmic structures through mutation and selection. In both cases, understanding the internal representations — whether through sparse autoencoders or meta scratch pads — transforms opaque optimization into interpretable discovery.

🛡️ Why Interpretability Matters for AI Safety

Understanding how AI works is not an academic luxury — it is an AI safety imperative. As models become more capable and are deployed in higher-stakes settings, the inability to explain their behavior creates risks that no amount of behavioral testing can fully address.

Verifying Alignment

The core problem of AI alignment is ensuring that AI systems do what we intend. But how do you verify intention in a system you cannot understand? A model might produce correct, helpful outputs during testing while harboring internal representations that would lead to harmful behavior in edge cases. Without mechanistic interpretability, we are relying on behavioral sampling — testing a tiny fraction of possible inputs and hoping the model behaves well on the rest.

Mechanistic interpretability offers a path to stronger guarantees. If we can identify the circuit responsible for a model's decision to refuse harmful requests, we can verify that the circuit is robust, test it against adversarial inputs, and monitor it during deployment. This is the difference between "we tested it and it seems safe" and "we understand why it is safe."

Detecting Deception

One of the most concerning scenarios in AI safety is deceptive alignment — a model that appears aligned during training and evaluation but pursues different objectives when deployed. Behavioral testing alone cannot reliably detect this, because a sufficiently capable model could learn to recognize when it is being tested.

Mechanistic interpretability could, in principle, detect deception by identifying internal representations that differ from the model's stated outputs. If a model claims to be helpful but has internal features representing deceptive strategies, interpretability tools could flag the discrepancy.

Predicting Failure Modes

Current AI systems fail in unpredictable ways. A model might handle 99.9% of inputs correctly but produce catastrophically wrong outputs on specific edge cases — and we often cannot predict which edge cases will cause failures until they occur in production.

Understanding the internal circuits responsible for a behavior allows researchers to identify the conditions under which those circuits break down. This is analogous to how understanding the stress tolerances of a bridge (through structural engineering) lets you predict when it will fail — rather than waiting for it to collapse.

Building Institutional Trust

For organizations deploying AI agents in production, interpretability research provides the foundation for trust. Regulatory frameworks like the EU AI Act increasingly require explanations for AI decisions in high-risk domains. Teams using multi-agent systems in healthcare, finance, or legal settings need more than "the model usually gets it right."

🏢 Interpretability in Practice: What It Means for Teams

Mechanistic interpretability is a research frontier, but its implications reach every team deploying AI today. The question is no longer whether to care about how AI works internally, but how to make responsible choices given the current state of understanding.

Choosing AI Providers Based on Transparency

Not all AI companies invest equally in understanding their own models. Anthropic, the company behind Claude, has the largest dedicated interpretability team in the industry and publishes its findings openly. This research directly improves the safety and predictability of the models that teams deploy. When evaluating AI tools for your team, the provider's commitment to interpretability research is a meaningful signal about the reliability of their models.

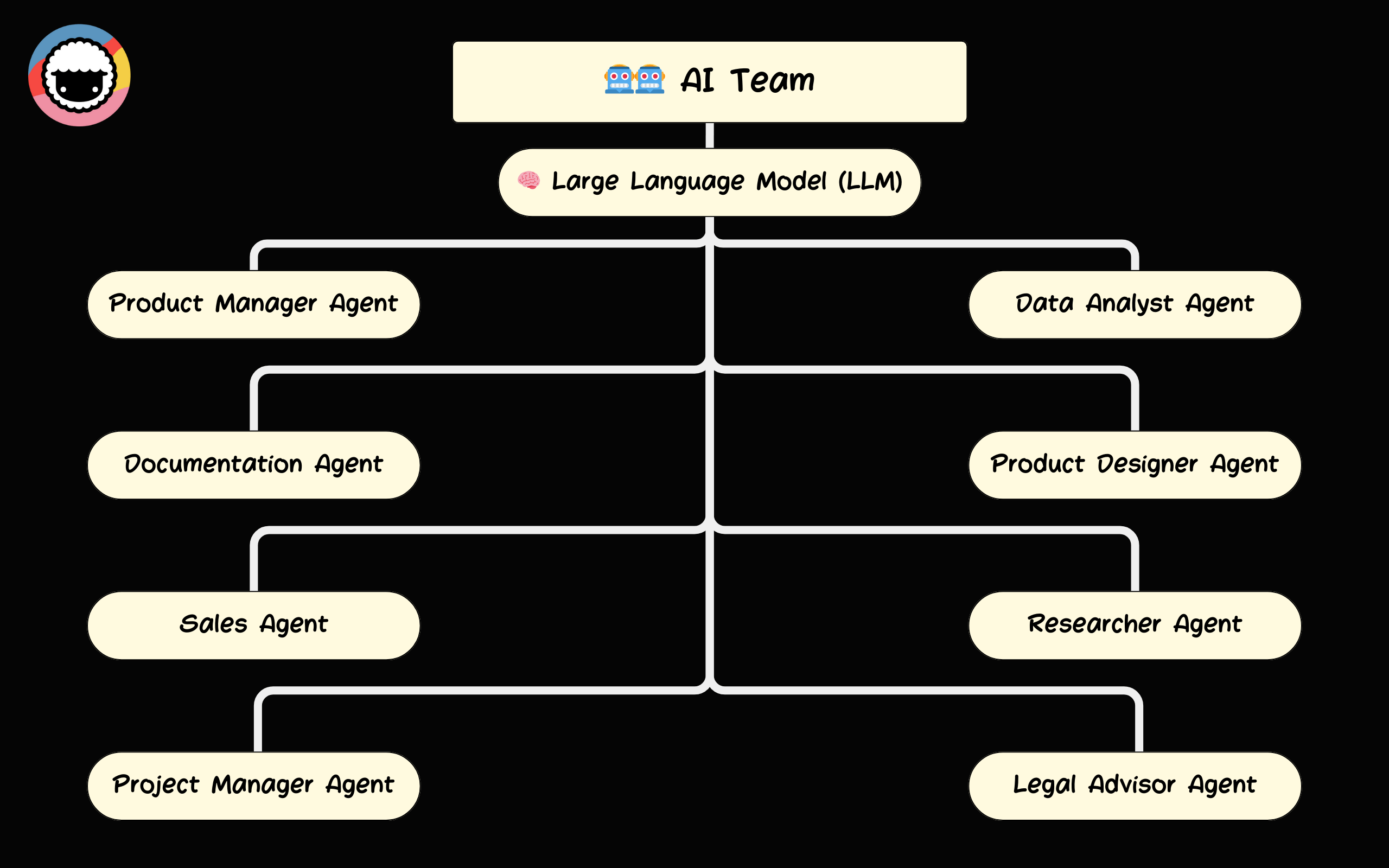

Multi-Model Architecture as a Safety Strategy

Taskade supports 15+ frontier models from OpenAI, Anthropic, and Google — including models from the teams leading interpretability research. This multi-model architecture gives teams practical advantages:

- Model selection based on task sensitivity: For high-stakes decisions, teams can route to models from providers with the strongest interpretability research and safety track records.

- Cross-model verification: Running the same task through multiple models and comparing outputs provides a behavioral check that complements (though does not replace) internal interpretability.

- Future-proofing: As interpretability research advances and produces safer models, teams on Taskade's platform can adopt those models immediately without changing their workflows.

Human-in-the-Loop by Design

Interpretability research reinforces a design principle that Taskade's AI agents already embody: humans should remain in the loop. Until we can fully understand and verify AI reasoning through mechanistic interpretability, human oversight is the critical safety layer.

Taskade implements this through:

- 7-tier RBAC (Owner, Maintainer, Editor, Commenter, Collaborator, Participant, Viewer) — controlling who can deploy and modify AI agents

- Workspace-scoped training — agents only access data within their designated workspace, limiting the blast radius of any unexpected behavior

- Workspace DNA — Memory (projects as persistent knowledge), Intelligence (AI agents with 33 built-in tools), and Execution (automations with 100+ integrations) — with human oversight at every stage of the loop

- Transparent agent actions — every action an AI agent takes in Taskade is logged and visible to the team, providing the behavioral transparency that complements ongoing interpretability research

The Workspace DNA architecture — where Memory feeds Intelligence, Intelligence triggers Execution, and Execution creates Memory — is designed so that humans can inspect and intervene at each handoff point. This is the practical application of interpretability principles: even when we cannot fully explain how a model arrives at a decision, we can control what it is allowed to do with that decision.

🔮 The Road Ahead: What We Still Do Not Know

Mechanistic interpretability has produced genuine breakthroughs, but intellectual honesty demands acknowledging how far the field has to go.

Current Limitations

Scale: The fully understood grokking model has a single layer and processes numbers from 0 to 112. Production large language models have hundreds of layers, billions of parameters, and process the full complexity of human language. The gap between what we can explain and what we ship is enormous.

Complexity: Even the behaviors we can explain in production models — two-digit addition, line breaks, poetry rhyming — required months of work by expert researchers. Explaining complex behaviors like multi-step reasoning, creative writing, or agentic decision-making may be qualitatively harder, not just quantitatively harder.

Superposition: Models pack far more concepts into their neurons than there are neurons. Sparse autoencoders help decompose this, but we do not yet know whether they capture all the relevant features or miss important ones. The features we find might be the "easy" ones, while the features driving the most interesting behaviors remain hidden.

Promising Directions

Scaling sparse autoencoders: Anthropic and other labs are training larger SAEs on larger models, extracting millions of features rather than thousands. More features means finer-grained understanding of what models represent internally.

Automated circuit discovery: Instead of manually tracing circuits (which is how most current work proceeds), researchers are developing automated tools that can identify computational pathways at scale. This could accelerate the field by orders of magnitude.

Causal abstraction: A theoretical framework that formalizes the relationship between high-level descriptions of model behavior (e.g., "the model is checking if the number is prime") and low-level computational mechanisms (specific neurons and weights). This bridges the gap between Levels 2 and 3 of the interpretability stack.

Developmental interpretability: Studying how models change during training — inspired by grokking — to understand when and why specific capabilities emerge. This could help predict when models will develop new (and potentially unexpected) abilities.

Physics-informed interpretability: In 2020, researchers at the IAIFI (NSF AI Institute for Artificial Intelligence and Fundamental Interactions) discovered that the statistics of wide neural networks are mathematically identical to quantum field theory. In quantum physics, particles are disturbances in quantum fields whose values fluctuate randomly — and in the simplest case (no interactions), those fluctuations follow a Gaussian distribution. Remarkably, very wide neural networks with random weights produce the exact same Gaussian statistics. Real neural networks (finite width) deviate from this — and those deviations are analogous to particle interactions, computable using the same Feynman diagrams physicists use to study quarks and electrons. This correspondence means that physicists' century-old toolkit — energy landscapes, phase transitions, renormalization group theory — may provide the mathematical language to describe what neural networks are actually computing. It is a completely new avenue for tackling the interpretability problem, bringing the rigor of fundamental physics to the study of AI.

The Long-Term Vision

The ultimate goal of mechanistic interpretability is to understand AI systems well enough to make them genuinely trustworthy. Not "trust because the benchmark scores are high" but "trust because we can verify the model's reasoning is sound."

This is not just a technical aspiration. It is an ethical one. As AI systems take on more responsibility — making medical recommendations, writing legal documents, managing automated workflows — the people affected by those decisions deserve to know that someone, somewhere, understands how those decisions are being made.

We are not there yet. We may not be there for years. But the researchers working on mechanistic interpretability are building the tools that will eventually get us there — one circuit, one feature, one manifold at a time.

Watch: Which AI model should you build with? — understanding model capabilities in Taskade Genesis.

❓ Frequently Asked Questions

What is mechanistic interpretability in AI?

Mechanistic interpretability is a research field that reverse-engineers neural networks to understand their internal computations. Researchers analyze the weights, activations, and circuits inside a model to identify how it processes information — rather than treating it as a black box. The field has produced discoveries like the Golden Gate Bridge feature in Claude and complete mechanistic explanations of how small transformers learn modular arithmetic.

Why is mechanistic interpretability important for AI safety?

Without understanding how AI systems work internally, we cannot verify they are safe or predict failure modes. Dario Amodei has called this lack of understanding unprecedented in the history of technology. Mechanistic interpretability provides tools to detect deceptive behaviors, verify alignment, and build AI systems whose reasoning can be inspected and validated.

What has Anthropic discovered about how Claude works internally?

Anthropic's interpretability team has identified a six-dimensional manifold in Claude Haiku for tracking character count in line-break decisions, mapped circuits for two-digit addition, discovered the Golden Gate Bridge feature in Claude 3 Sonnet, and traced poetry rhyming mechanisms. These findings show that models develop structured, interpretable internal representations — though they represent only a tiny fraction of the model's total capabilities.

What is the grokking phenomenon in AI?

Grokking is when a neural network suddenly transitions from memorizing training data to genuinely understanding the underlying pattern, often after thousands of additional training steps. Discovered by OpenAI researchers in 2022, grokking reveals that models can independently discover elegant mathematical solutions — like using trigonometric identities and Fourier transforms to solve modular arithmetic.

How does mechanistic interpretability differ from traditional explainable AI?

Traditional XAI methods like LIME and SHAP approximate model behavior from the outside, explaining outputs in terms of input features without examining internal computations. Mechanistic interpretability opens the model and analyzes the actual weights, activations, and circuits to understand how information is processed at a fundamental level. It is the difference between describing what a machine does and understanding how it works.

What are features and circuits in neural networks?

Features are internal representations corresponding to human-interpretable concepts — like the Golden Gate Bridge feature found in Claude 3 Sonnet. Circuits are connected pathways of features that implement specific computations, like adding two-digit numbers. Models pack many features into each neuron through superposition, making extraction and analysis challenging but essential for understanding model behavior.

Can we fully understand large language models through interpretability?

Not yet. Current mechanistic interpretability has explained only simple behaviors — two-digit addition, line-break formatting, basic rhyming — in production models. The complex capabilities of frontier LLMs (multi-step reasoning, creative writing, agentic behavior) remain fundamentally opaque. The field is progressing rapidly through techniques like sparse autoencoders and automated circuit discovery, but complete understanding of production models remains an open challenge.

How does Taskade benefit from interpretability research?

Taskade supports 15+ frontier models from OpenAI, Anthropic, and Google — including models from teams leading interpretability research. The multi-model architecture lets teams select models based on transparency and safety profiles. Combined with human-in-the-loop agent design, workspace-scoped training, 7-tier RBAC, and the Workspace DNA loop (Memory + Intelligence + Execution), Taskade enables responsible AI deployment grounded in the latest safety research. Get started free →

🚀 Build with AI You Can Trust

Mechanistic interpretability is revealing that AI models are not random number generators — they develop structured, meaningful internal representations. The field is young, but the direction is clear: transparency and understanding are the foundation of trustworthy AI.

While researchers work to open the black box, teams building with AI today can make choices that align with those values. Choose providers investing in interpretability. Keep humans in the loop. Design systems where AI actions are visible and controllable.

Taskade is built on these principles — AI agents with transparent actions, automations with human oversight, and a workspace architecture (Memory + Intelligence + Execution) that keeps your team in control at every step.

Ready to build with AI from teams doing the hard work of understanding?

👉 Browse the Community Gallery →

💡 Dive deeper into the AI intelligence cluster:

- What Is Grokking in AI? — The three phases, trigonometric solution, and excluded loss metric

- How Do LLMs Work? — Transformers, attention, and scaling laws explained

- What Is AI Safety? — Risks, alignment, and regulation

- What Is Artificial Life? — How intelligence emerges from code

- What Is Intelligence? — From neurons to AI agents

- From Bronx Science to Taskade Genesis — Connecting the dots of AI history

- They Generate Code. We Generate Runtime — The Genesis Manifesto

- The BFF Experiment — From Noise to Life